In the world of cloud computing there are often multiple ways to achieve the same or similar result. In Oracle Cloud Infrastructure (OCI), logs are generated by the platform itself (such as audit logs), by OCI native services such as the Network Firewall Service, and by compute instances or your applications as custom logs. These logs typically live in OCI Logging, where you can view or search them as required.

Collecting and storing logs is useful, but if you want to produce insights you will need a way to analyse and visualise the log data. OCI Logging Analytics allows you to index, enrich, aggregate, explore, search, analyse, correlate, visualise and monitor all log data from your applications and system infrastructure.

From OCI Logging there are two common ways to ingest logs into Logging Analytics. The first uses a Service Connector to send logs to an Object Storage bucket, and an Object Collection Rule to then import the logs into Logging Analytics. The second uses a Service Connector to send the logs directly to Logging Analytics. Both are valid options, but each requires some consideration before use.

Using the object storage method means your logs will also be stored as files, so you will need appropriate policies that only allow authorised users to access them. You will also need to create a lifecycle policy, otherwise the logs will be retained indefinitely. In addition to the object storage resources, you will need an object collection event rule that triggers when a new file is created in the bucket. Logging Analytics uses this rule to know when to import new log data and into which log source. Depending on the service connector configuration, there may also be a delay between a log entry being visible in Logging and it being visible in Logging Analytics. Ultimately this method is useful if you want to retain logs for a period of time, or wish to replicate or archive them for compliance or security purposes.

Using the direct service connector method to send logs from Logging to Logging Analytics means your logs won’t be stored in object storage, so you won’t require a bucket or an additional event rule. The upside is that log delivery is typically faster, as we’re not performing the additional object storage activities. However, using this option with its default settings will result in all your logs being stored in the “OCI Unified Schema Logs” log source. Having only a single log source makes search queries and ongoing log management more complicated.

Until recently I preferred the object storage method, because it allowed me to easily separate my logs into different logical log sources within Logging Analytics. I need to thank @lab4geeks for showing me a better way: using the more direct method while still keeping my logs logically separate in different log sources.

If you’re looking for instructions about the object storage logging method, check out my other post Virus & Malware Scanning Object Storage.

How to preserve log sources in Logging Analytics

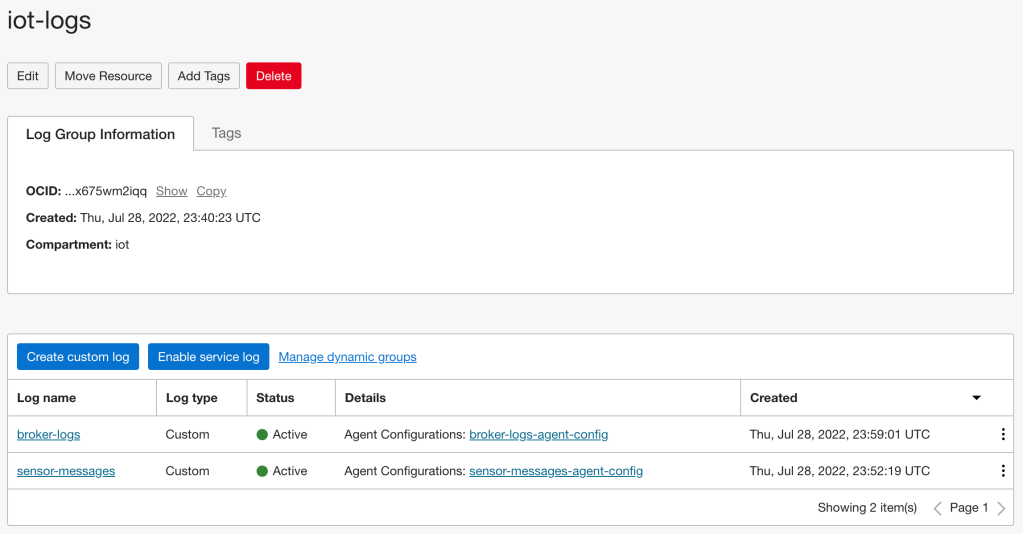

Before we begin, you will need logs already in Logging. In my example I’m using my iot-logs log group, which has two custom logs:

- broker-logs, my Mosquitto server logs

- sensor-messages, the MQTT messages published to my Mosquitto server.

For each log source that you want to import into Logging Analytics we will need to create the required configuration. I’m going to do this for my sensor-messages log.

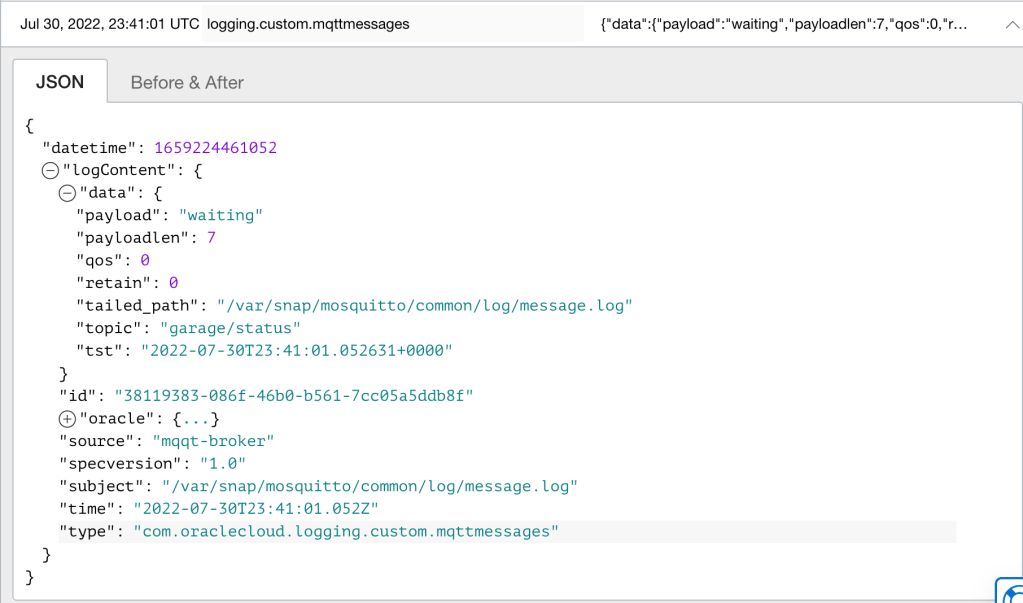

View a log entry, and note down the “type” key value.

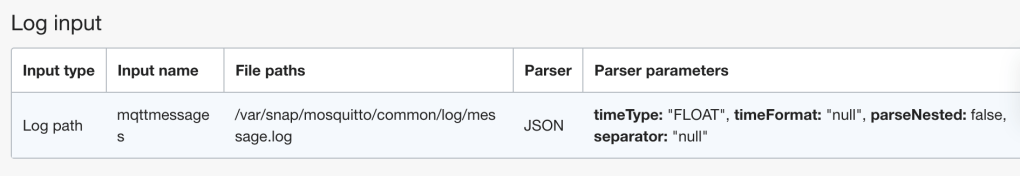

As my log is a custom log type, the value is com.oraclecloud.logging.custom.mqttmessages. The mqttmessages value is appended to the prefix com.oraclecloud.logging.custom and set in my agent configuration:

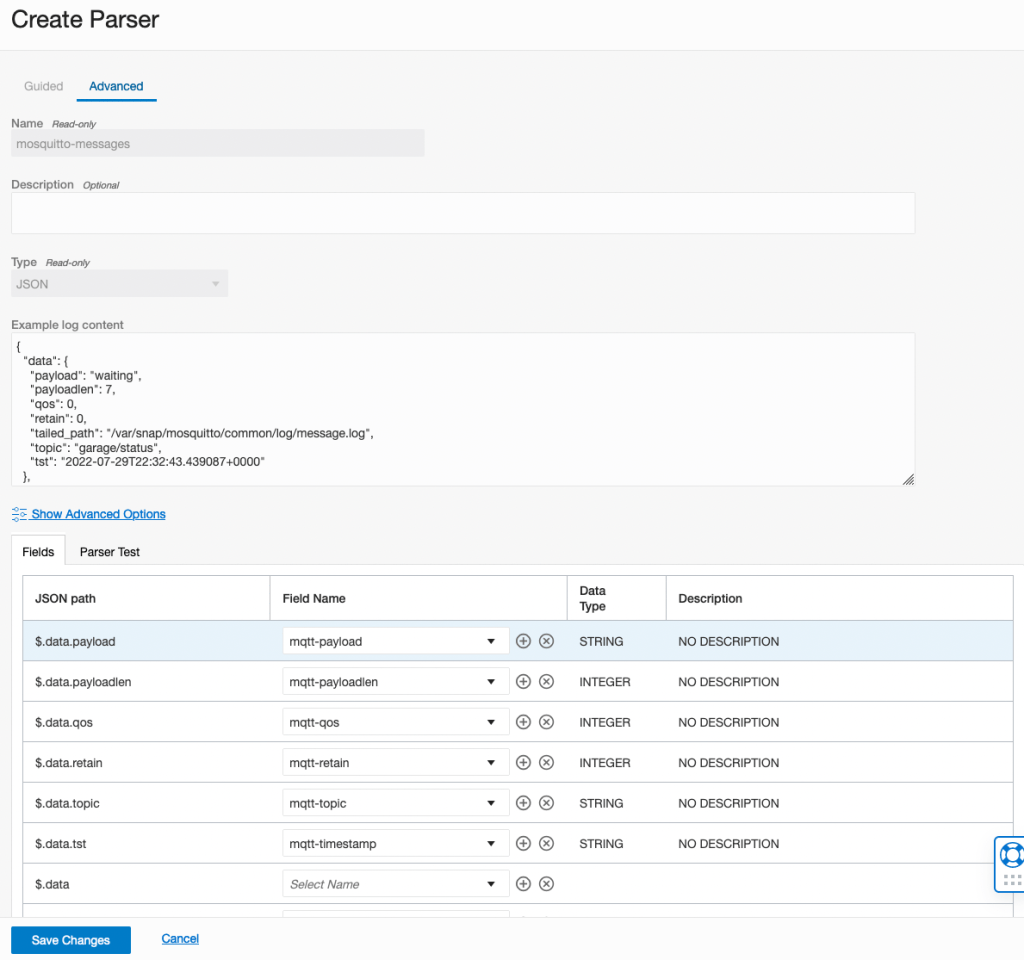
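As a minimal sketch of how that type value is composed, the snippet below joins the prefix and the suffix from the agent configuration. The helper name `custom_log_type` is hypothetical and exists only for illustration; it is not part of any OCI SDK.

```python
# Prefix that appears on the custom log type shown in the log entry above.
CUSTOM_LOG_PREFIX = "com.oraclecloud.logging.custom"


def custom_log_type(suffix: str) -> str:
    """Join the prefix and the suffix set in the agent configuration.

    Hypothetical helper, for illustration only.
    """
    return f"{CUSTOM_LOG_PREFIX}.{suffix}"


# The suffix used in this post's agent configuration.
print(custom_log_type("mqttmessages"))
```

The resulting string is the value to note down, as it is reused in the later steps.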

Now we need to create a parser for my MQTT log type, as there is no existing parser in Logging Analytics for it. To create a custom parser, copy the log entry from Logging. Note the structure in the example log content field. It only needs to contain the “data” section and its parent JSON object:

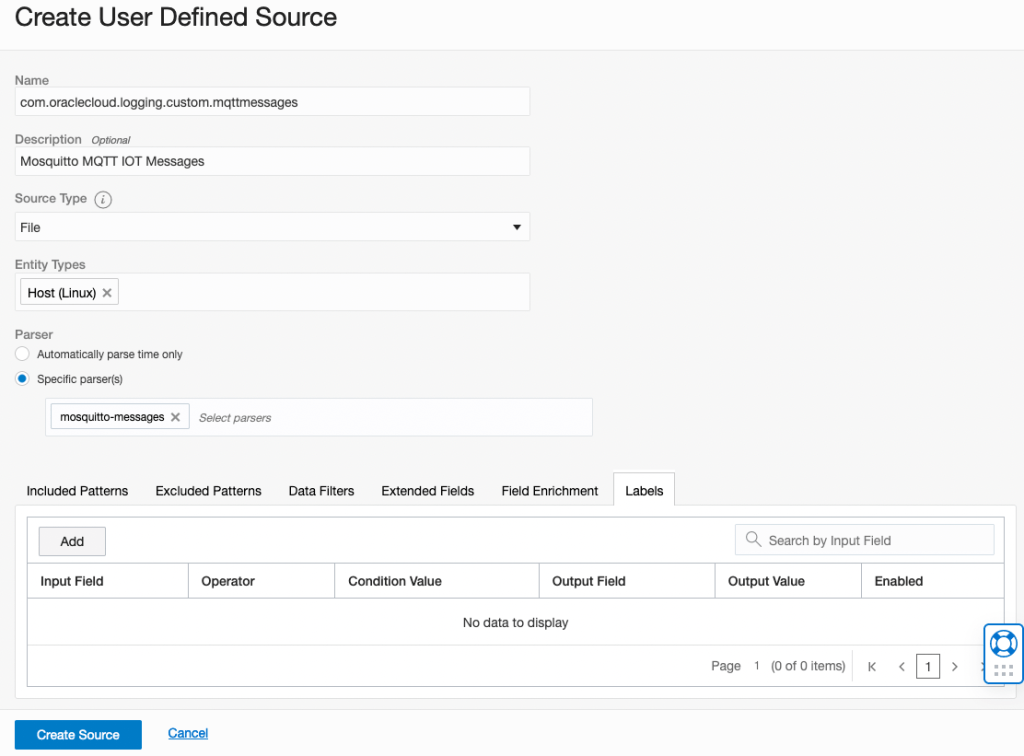
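If you would rather trim the copied log entry with a script than by hand, the following is a rough sketch of my reading of that step. The sample entry is invented: the `topic` and `payload` fields inside `data`, and the `id`, `source` and `time` values, are placeholders rather than values from my logs, and `example_log_content` is a hypothetical helper name.

```python
import json

# Invented sample entry; only "data" and "type" matter for this sketch.
raw_entry = """{
  "data": {"topic": "sensors/temp", "payload": "21.5"},
  "id": "example-id",
  "source": "example-source",
  "time": "2024-01-01T00:00:00.000Z",
  "type": "com.oraclecloud.logging.custom.mqttmessages"
}"""


def example_log_content(entry_json: str) -> str:
    """Keep only the "data" section, wrapped in its parent JSON object."""
    entry = json.loads(entry_json)
    return json.dumps({"data": entry["data"]}, indent=2)


# Text that could be pasted into the example log content field.
print(example_log_content(raw_entry))
```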

Now we need to create a source. Note that the name of the source must match the type field in Logging, e.g. “com.oraclecloud.logging.custom.mqttmessages”:

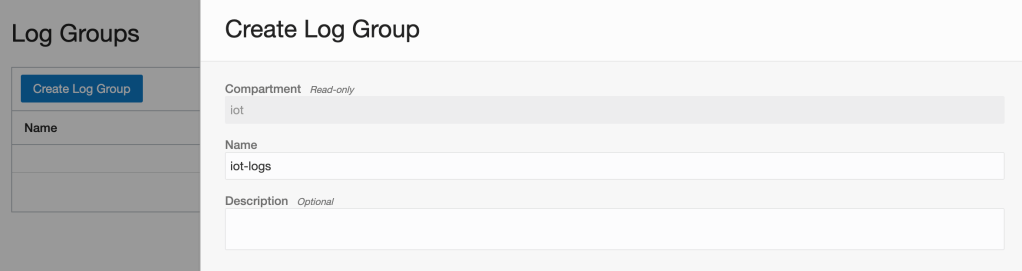

Now we need to create a Log Group in Logging Analytics:

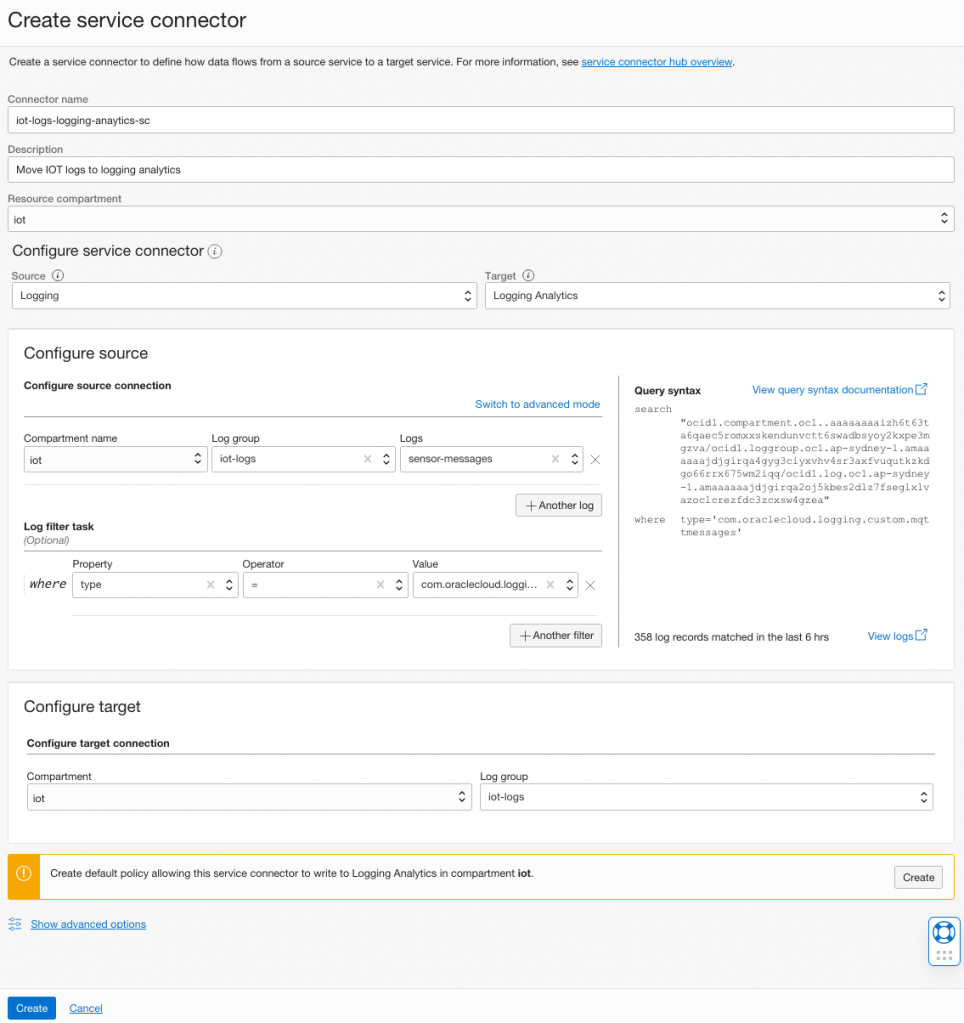

Now we need to create a service connector to move the logs between our Logging and Logging Analytics log groups. Note that I have added the log filter “where property type = ‘com.oraclecloud.logging.custom.mqttmessages’”. If you have multiple log types that you want to move to Logging Analytics, you can use the same connector. If you are adding additional log filters, you will need to switch to advanced mode and change the “and” to “or”. This is because the form adds the filters as “and” conditions, and a single log entry has only one type, so the expression will return no results and no logs will be sent to Logging Analytics:

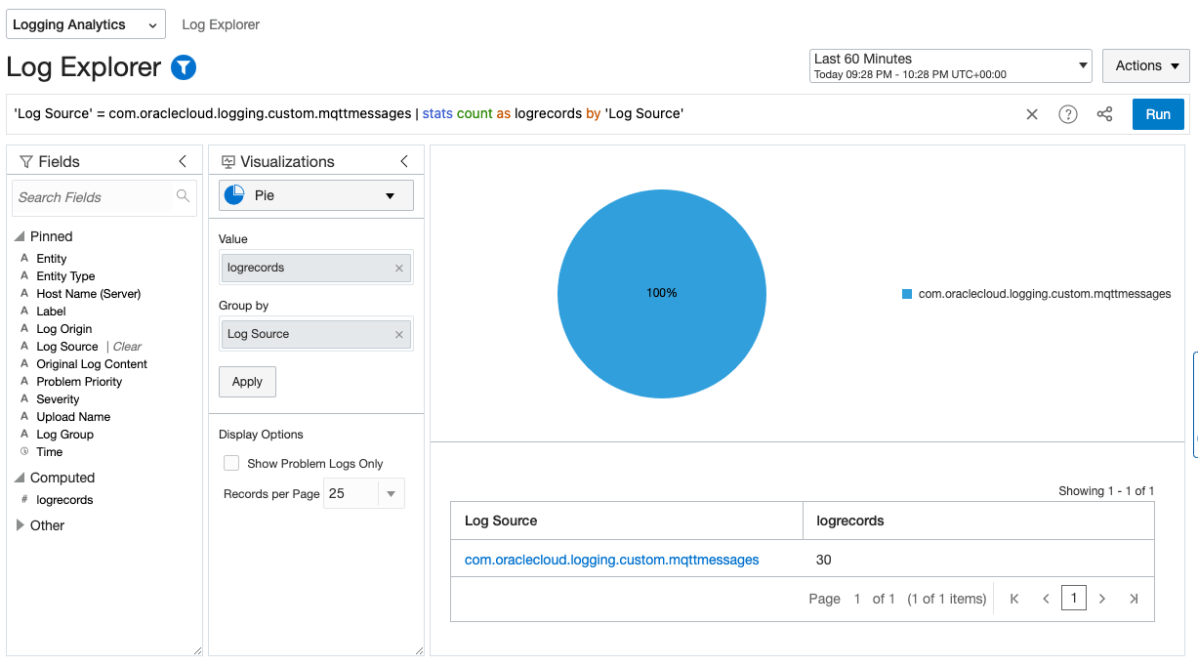

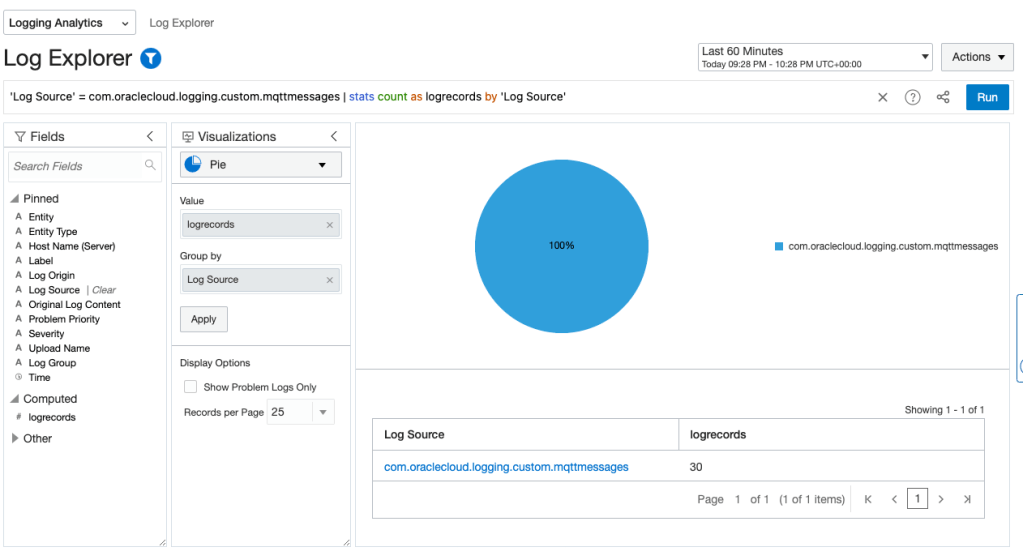
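To see why the “and” form matches nothing, here is a small Python sketch that mimics the two filter behaviours on in-memory entries. It does not use the service connector's own query syntax, and the second type value (`brokerlogs`) is a hypothetical name I made up for the broker log.

```python
MQTT_TYPE = "com.oraclecloud.logging.custom.mqttmessages"
BROKER_TYPE = "com.oraclecloud.logging.custom.brokerlogs"  # hypothetical type

# One sample entry per log; each entry carries exactly one type.
entries = [{"type": MQTT_TYPE}, {"type": BROKER_TYPE}]
wanted = [MQTT_TYPE, BROKER_TYPE]


def matches_all(entry: dict, types: list) -> bool:
    """Mimics "type = A and type = B": every condition must hold."""
    return all(entry["type"] == t for t in types)


def matches_any(entry: dict, types: list) -> bool:
    """Mimics "type = A or type = B": one condition is enough."""
    return any(entry["type"] == t for t in types)


and_hits = [e for e in entries if matches_all(e, wanted)]
or_hits = [e for e in entries if matches_any(e, wanted)]
print(len(and_hits), len(or_hits))  # prints "0 2"
```

Because an entry can never equal two different type values at once, the “and” version filters out everything, while the “or” version lets both logs through.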

Make sure to click “Create default policy” and then create the service connector. After waiting a couple of minutes, I can see my logs and search them using the query “‘Log Source’ = com.oraclecloud.logging.custom.mqttmessages” in Logging Analytics.

In large environments, logging strategies can be complex to manage. Hopefully this guide has been useful in demonstrating how you can ingest logs into Logging Analytics without requiring additional object storage buckets and event rules.