I’m sure we’ve all experienced it, either as a user or as a system administrator. You know, that important SSL certificate that everyone forgot about, nobody renewed, and has now expired?

When an SSL/TLS certificate expires it can create a number of problems, including:

- Users’ web browsers will display warning messages, indicating that the website’s connection is not secure. This can lead to a loss of trust and deter user engagement.

- API clients will often refuse to establish a connection if an SSL certificate is not valid, potentially disrupting crucial data exchanges and integrations.

- Search engines may flag the site as unsafe, leading to a drop in rankings and reduced organic traffic.

Also, regularly encountering certificate warnings conditions users to accept future certificate errors, which makes them more likely to click through an SSL certificate warning should they be targeted in a man-in-the-middle attack.

To avoid these issues, it’s important to have enough advance warning that a certificate is going to expire so you can obtain a new one, install it, and test it thoroughly.
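As a rough illustration of what "advance warning" means in practice, here is a minimal Python sketch that works out how many days a certificate has left. It uses only the standard library. The function names `days_until_expiry` and `fetch_not_after` are my own for this example and are not part of any OCI tooling.

```python
import socket
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> int:
    """Whole days between `now` and a certificate's notAfter timestamp.

    `not_after` uses the format returned by SSLSocket.getpeercert(),
    for example "Jun 30 12:00:00 2023 GMT". A negative result means
    the certificate has already expired.
    """
    expires = datetime.fromtimestamp(
        ssl.cert_time_to_seconds(not_after), tz=timezone.utc
    )
    return (expires - now).days


def fetch_not_after(host: str, port: int = 443, timeout: float = 5.0) -> str:
    """Connect to host:port and return the server certificate's notAfter field.

    Note: the default context verifies the certificate, so an already
    expired certificate raises ssl.SSLCertVerificationError here instead
    of returning a date.
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]
```

For a live endpoint you could call `days_until_expiry(fetch_not_after("example.com"), datetime.now(timezone.utc))`, where `example.com` stands in for your own hostname, and compare the result against however many days your renewal process needs.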

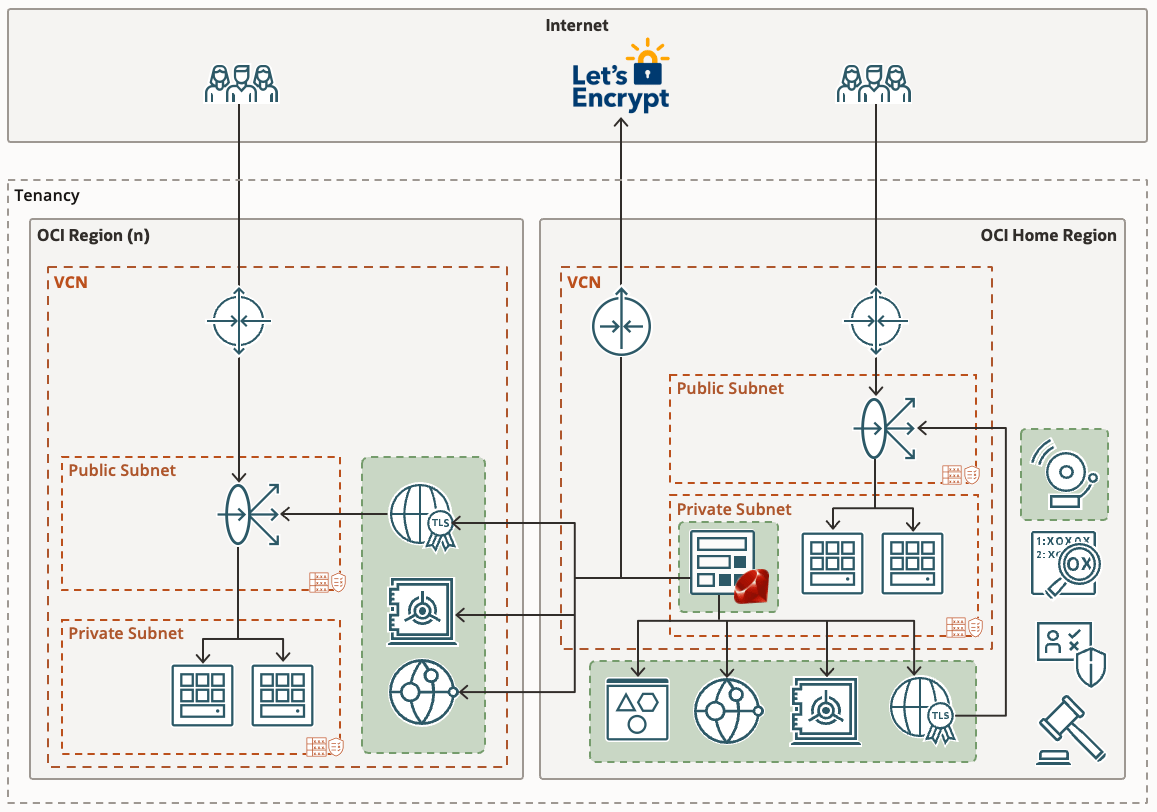

If you’re already using Domain Validated (DV) certificates, such as those issued by Let’s Encrypt, you might want to consider my automated Let’s Encrypt solution. This solution automatically handles the entire certificate lifecycle using serverless functions inside OCI. For those who prefer to bring their own certificates, these can be imported into OCI’s certificate service.

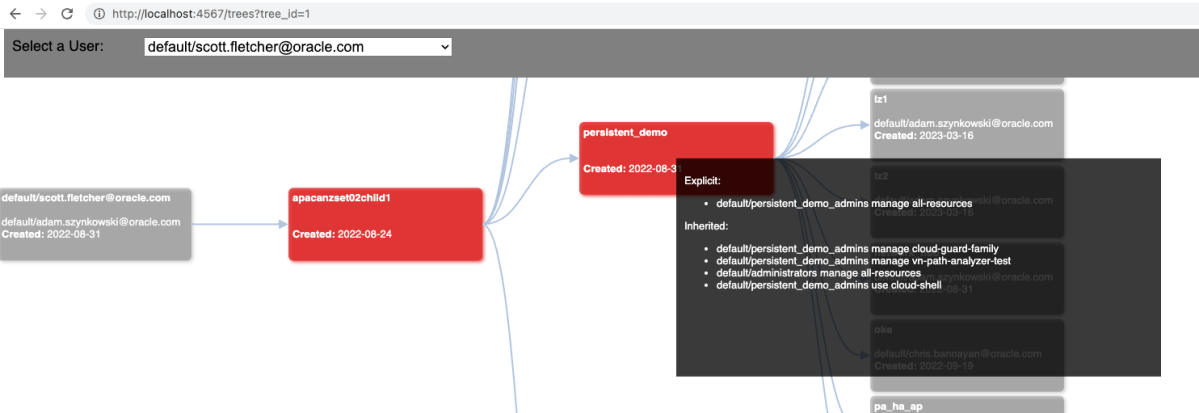

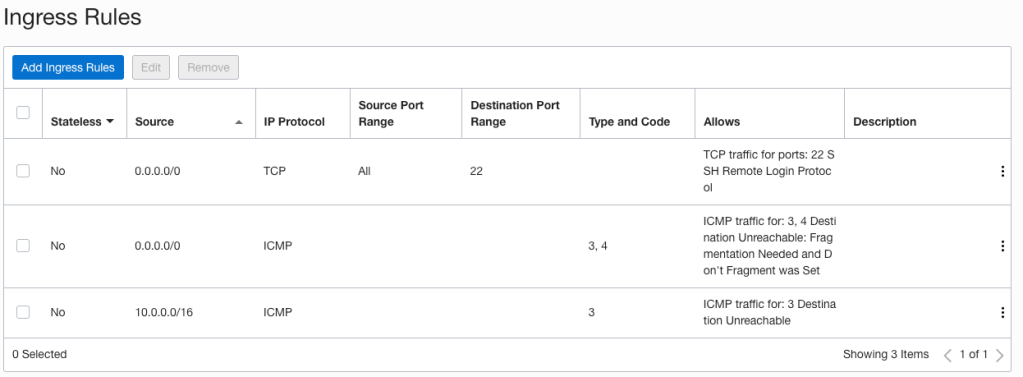

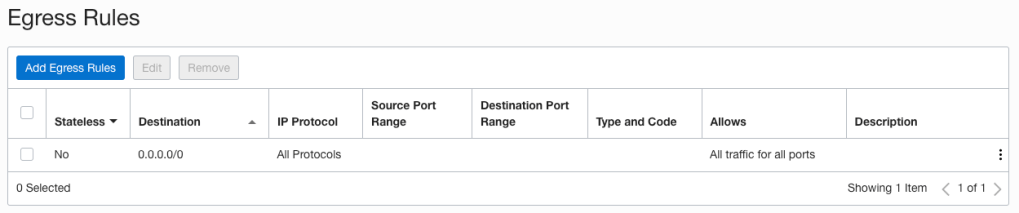

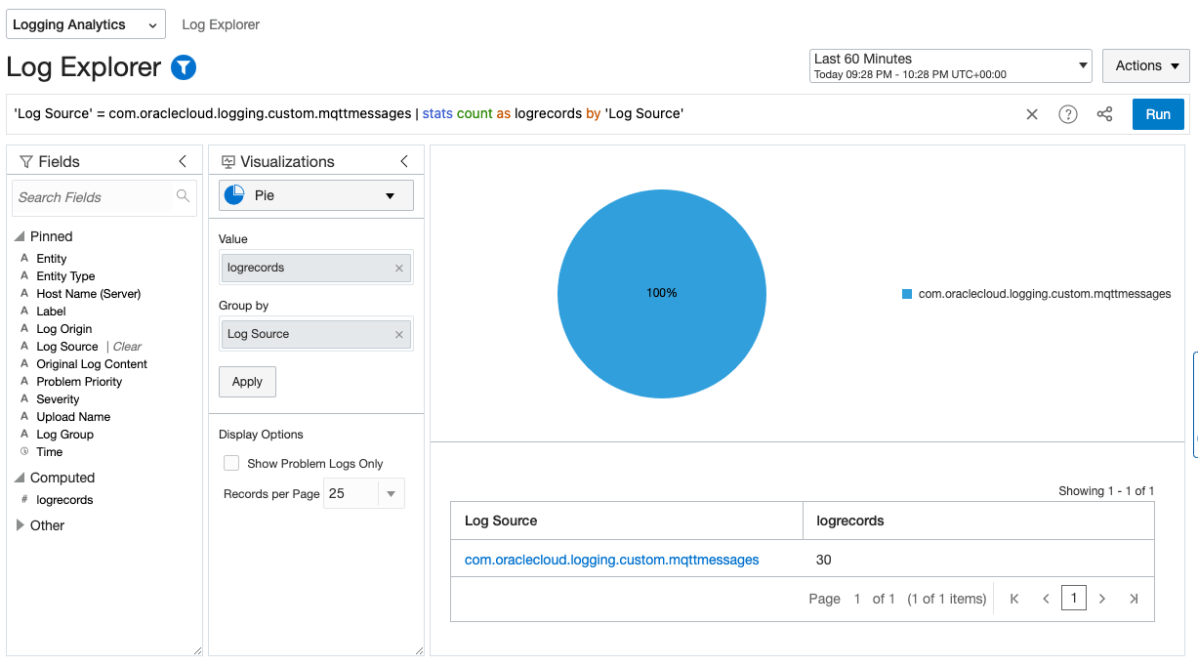

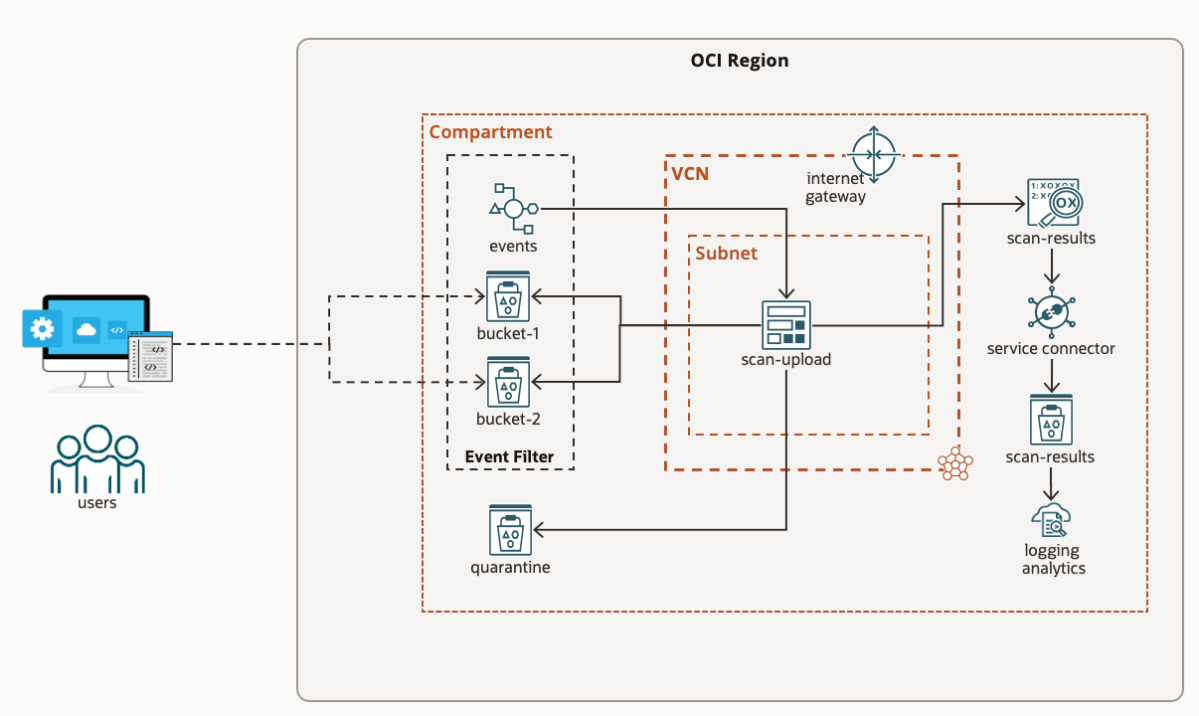

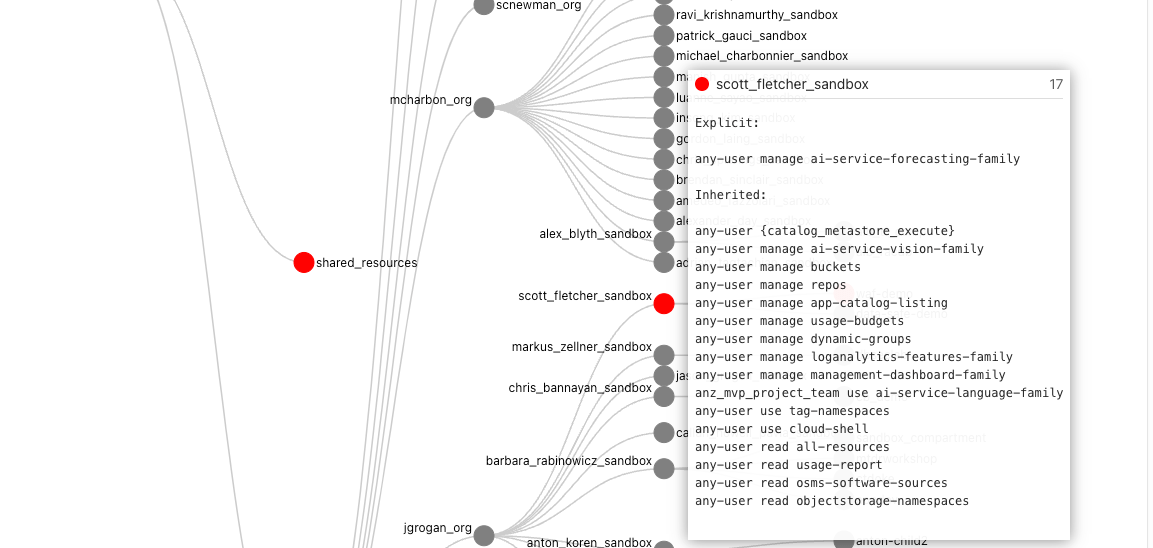

As at June 2023, certificate expiry monitoring in OCI is primarily focused on certificates associated with Load Balancers. To improve monitoring, I’ve developed a serverless solution that examines the expiration dates of all certificates. The solution emits logs and sends email notifications, with a customisable lead time that you can align with your organisation’s certificate procurement process. Logs can also be forwarded to your SIEM solution if required.
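To make the lead time idea concrete, here is a minimal sketch of the filtering step only. It is not the code from my solution, and it makes no OCI calls: `CertificateRecord`, `expiring_within` and `format_alert` are hypothetical names, and the inventory is assumed to have been gathered already from wherever your certificates live.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class CertificateRecord:
    # Hypothetical record; a real inventory would come from your certificate store.
    name: str
    not_after: datetime


def expiring_within(certs, lead_time: timedelta, now: datetime):
    """Return certificates that have expired or will expire within lead_time,
    ordered with the soonest expiry first."""
    cutoff = now + lead_time
    due = [c for c in certs if c.not_after <= cutoff]
    return sorted(due, key=lambda c: c.not_after)


def format_alert(cert: CertificateRecord, now: datetime) -> str:
    """Build a one-line message suitable for a log entry or an email body."""
    if cert.not_after <= now:
        return f"{cert.name}: EXPIRED on {cert.not_after:%Y-%m-%d}"
    days = (cert.not_after - now).days
    return f"{cert.name}: expires in {days} days ({cert.not_after:%Y-%m-%d})"


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    inventory = [
        CertificateRecord("www", now + timedelta(days=10)),
        CertificateRecord("api", now + timedelta(days=200)),
    ]
    # A 30 day lead time is only an example; pick one that matches how long
    # it takes your organisation to procure a certificate.
    for cert in expiring_within(inventory, timedelta(days=30), now):
        print(format_alert(cert, now))
```

Keeping the lead time as a parameter means an organisation with a slow procurement process can ask for 60 or 90 days of notice without changing the logic.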
