Introduction

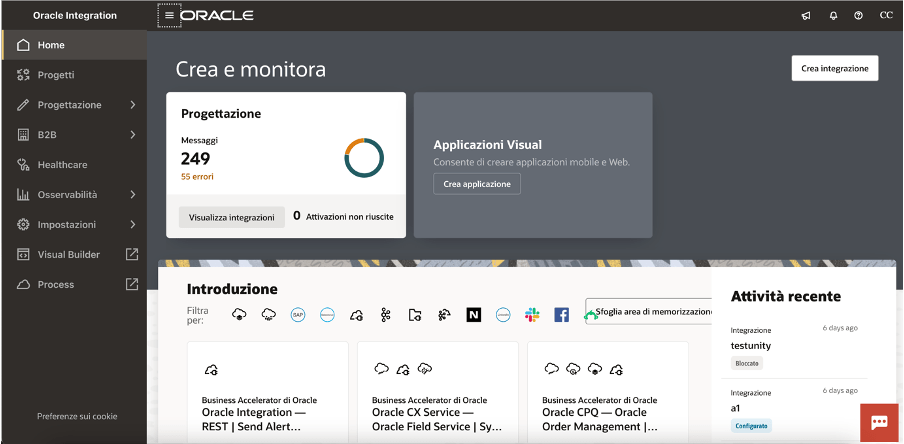

For several months now, Oracle Integration Cloud (OIC) has provided native support for MCP through an embedded MCP server. This enhancement is a significant step toward simplifying the development and exposure of AI applications, making it easier to integrate intelligent tools and services into business processes.

Why MCP Helps in OIC

1. Standardization and Interoperability

MCP (Model Context Protocol) provides an open and structured standard that facilitates communication between applications and services, reducing integration costs and the risk of errors caused by proprietary formats.

2. Simplicity and Speed of Integration

With MCP embedded in OIC, new AI tools can be exposed as MCP services without the need for additional gateways, enabling rapid integration with existing systems.

3. Security and Governance

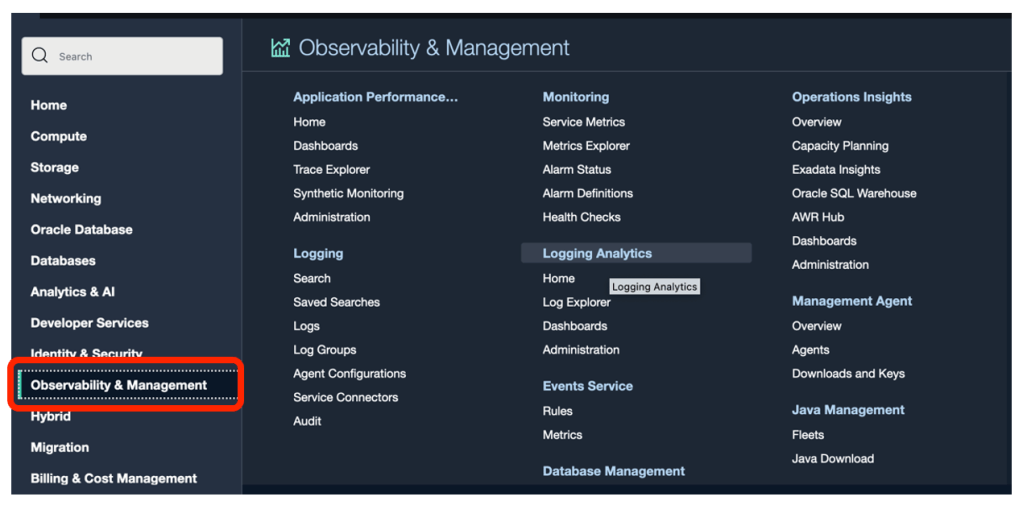

OIC provides centralized authentication, authorization, and logging capabilities, ensuring traceability and security even when implementing new AI services via MCP.

4. Scalability and Orchestrated Management

OIC enables dynamic management and scaling of MCP server instances in the cloud, adapting to growing enterprise volumes and requirements.

5. Open to the AI Ecosystem

Exposing AI tools via MCP makes it possible to easily integrate both Oracle services and third-party solutions, offering maximum flexibility in building digital processes.

The embedded MCP support in OIC enables a new integration paradigm for AI applications, simplifying governance, improving security, and accelerating time-to-value for innovative solutions across key enterprise domains such as HCM, CRM, EPM and ERP.

With MCP, OIC becomes:

- a dynamic catalog of enterprise capabilities exposed as AI-ready tools,

- an abstraction layer between LLMs and application complexity,

- a single control point for security, auditing, and governance of AI actions.

Why Exposing Tools via MCP Helps

This is the fundamental approach to decoupling AI from enterprise systems.

Without MCP:

- the LLM must understand APIs, payloads, authentication, and versioning;

- tight coupling and high maintenance costs result.

With MCP:

- the LLM invokes functional intents (“create employee,” “retrieve order,” “update opportunity”);

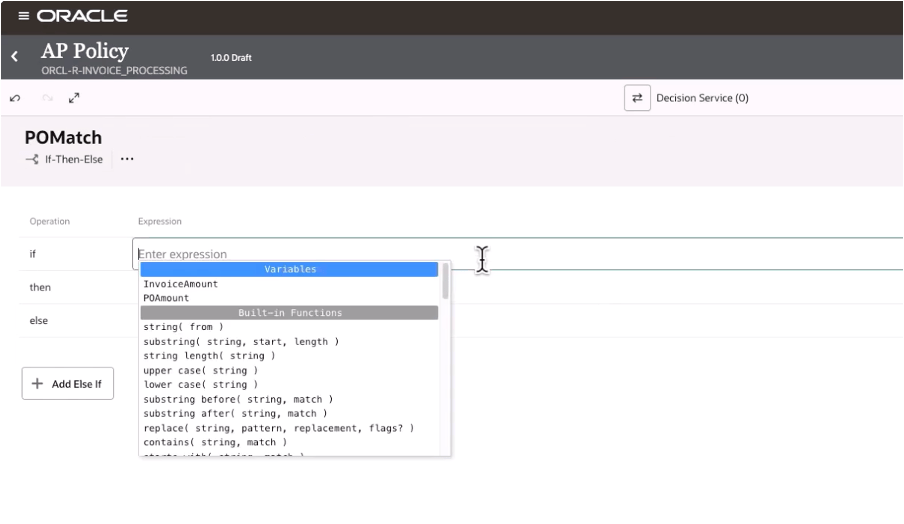

- OIC manages:

  - transformations,

  - validations,

  - orchestrations,

  - error handling.

In this way, OIC integration flows and processes become reusable tools that do not need to be rewritten for each AI agent or model. Just as important, the LLM never accesses ERP, HCM, or CRM systems directly.
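To make the idea of a "functional intent" concrete, here is a minimal sketch of what an MCP client sends when it invokes a tool. MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with the tool name and its arguments. The tool name `retrieveOrder` and the `orderNumber` argument are hypothetical, chosen only to mirror the "retrieve order" intent above; they are not taken from an actual OIC project.

```python
import json
from itertools import count

# Simple counter for JSON-RPC request ids.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for the MCP `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and argument names, for illustration only.
request = build_tool_call("retrieveOrder", {"orderNumber": "SO-1001"})
print(json.dumps(request, indent=2))
```

Note that the request carries only the intent and its business parameters. Endpoints, payload formats, and credentials of the back-end system do not appear in it; those stay behind the MCP server.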

Why MCP in OIC Is Particularly Effective

OIC has characteristics that make it an ideal MCP server:

| OIC Capability | MCP Benefit |
| --- | --- |
| SaaS Adapters (HCM, ERP, CRM) | MCP tools already connected to core systems |
| Integration monitoring | Telemetry on AI actions |
| Lookups & Packages | Semantically versioned tools |
| B2B Trading Partner Management | AI acting on external flows |
| Visual Flow & Process Automation | Tools executing complex processes |

In practice, MCP makes what OIC already does consumable by AI.

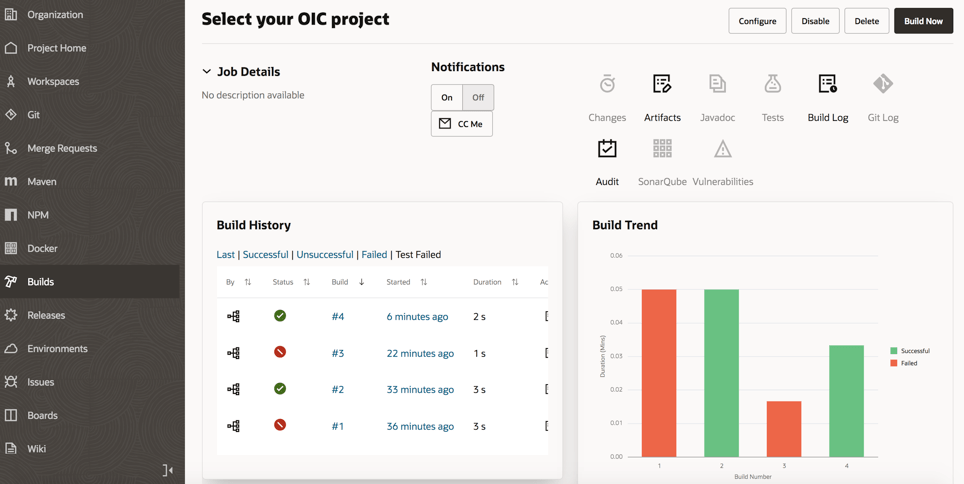

One of the questions I am asked most often is:

“Do you have use cases which contextualize the use of an MCP server in OIC compared to other approaches?”

That question is the reason I decided to write this article, which offers some pointers for positioning OIC as an MCP server as well.

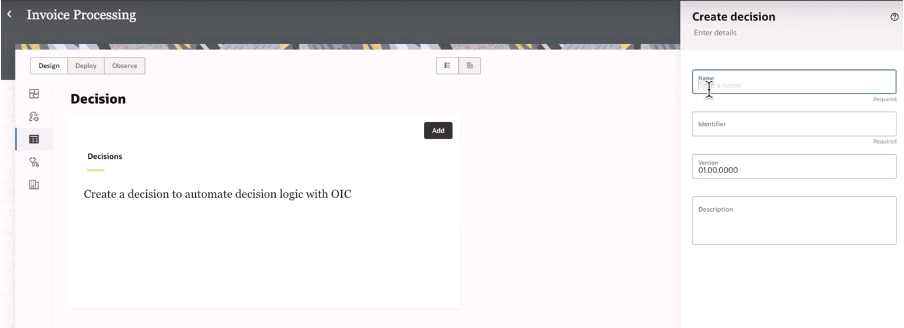

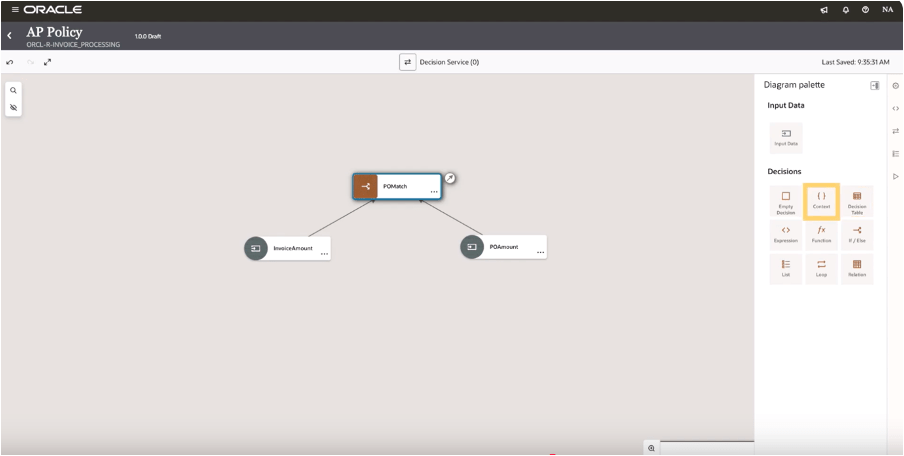

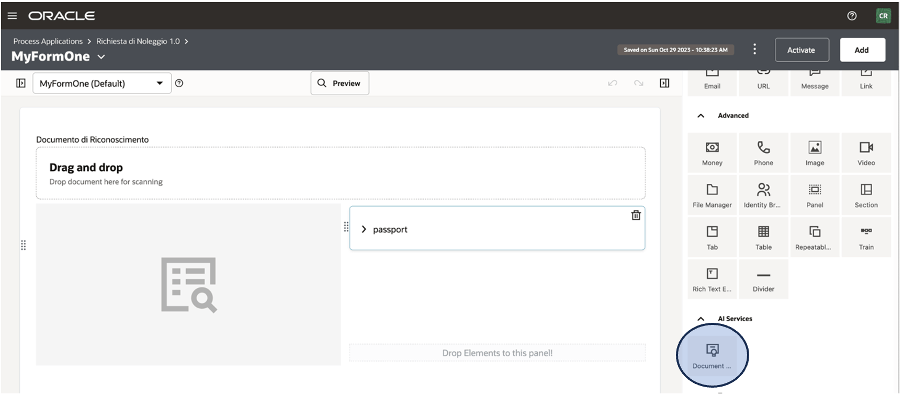

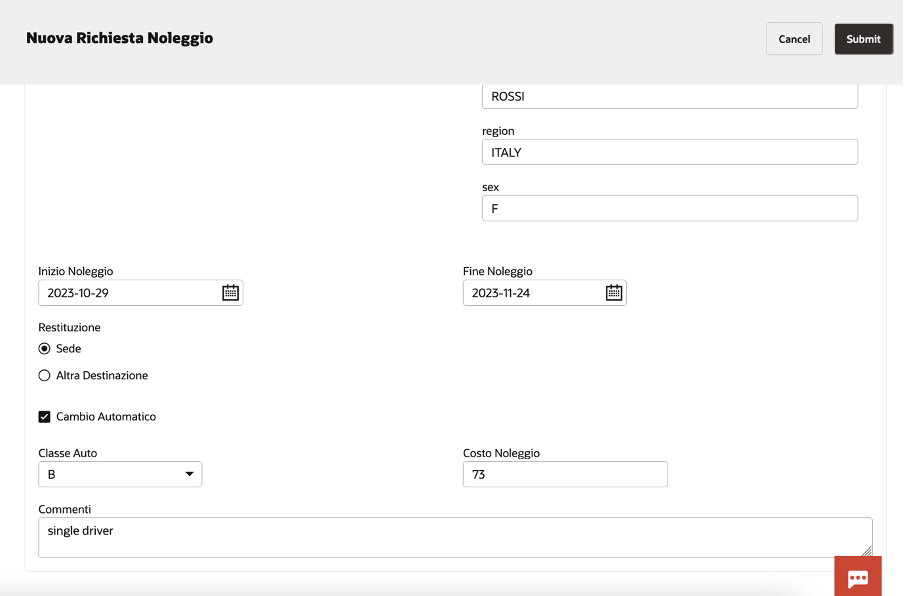

When we refer to MCP tools in this scenario, we essentially mean integration flows built in OIC that implement specific actions. An AI agent, together with an LLM (ChatGPT, Gemini, Grok, etc.), invokes them to implement a specific use case.

Below are some use cases and examples for each domain pillar.

Application Examples Across Enterprise Domains

HCM – AI HR Assistant

Scenario

A conversational HR assistant supporting HR Business Partners and employees.

MCP Tools Exposed by OIC

- `getEmployeeProfile`

- `createNewHire`

- `submitLeaveRequest`

- `getPayrollSummary`

- `openHRServiceRequest`
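As an illustration of how such a tool might be described to an LLM: an MCP server advertises each tool with a `name`, a `description`, and an `inputSchema` expressed as JSON Schema. The sketch below shows one possible descriptor for `createNewHire`. The field names (`jobTitle`, `location`, `startDate`) and the description text are assumptions made for this example, not the definition OIC generates.

```python
# Hypothetical descriptor for the createNewHire tool, in the shape MCP
# uses when listing tools: name, description, and a JSON Schema for inputs.
CREATE_NEW_HIRE_TOOL = {
    "name": "createNewHire",
    "description": "Create a new hire in the HR system.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "jobTitle": {"type": "string"},
            "location": {"type": "string"},
            "startDate": {"type": "string", "format": "date"},
        },
        "required": ["jobTitle", "location", "startDate"],
    },
}


def missing_required(tool: dict, arguments: dict) -> list[str]:
    """Return the required input fields that the arguments do not provide."""
    required = tool["inputSchema"].get("required", [])
    return [field for field in required if field not in arguments]


# The agent supplied a job title and a location but no start date.
print(missing_required(CREATE_NEW_HIRE_TOOL, {"jobTitle": "Backend Developer", "location": "Milan"}))
```

The schema is what lets the model map a free-text request onto structured arguments, and it gives the server a first line of validation before any integration flow runs.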

Flow

- The user asks: “Hire a new backend developer in Milan starting October 1st.”

- The LLM:

  - interprets the intent,

  - invokes `createNewHire` via MCP.

- OIC:

  - validates data,

  - enriches it using lookups (job code, legal entity),

  - orchestrates Oracle HCM Cloud.

- Result:

  - the hire is created,

  - workflow tasks are triggered,

  - confirmation is returned to the AI agent.
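The validate, enrich, and orchestrate steps of this flow can be sketched in plain Python. This is only a conceptual model of what an OIC integration does behind the tool, not OIC code: the lookup tables, the field names, the year in the sample date, and the `submit_to_hcm` stub are all assumptions introduced for the example.

```python
from datetime import date

# Hypothetical lookup tables standing in for OIC lookups.
JOB_CODES = {"backend developer": "IT_DEV_BE"}
LEGAL_ENTITIES = {"Milan": "IT_LE_MILAN"}


class ValidationError(ValueError):
    """Raised when the tool input cannot be processed."""


def validate(request: dict) -> None:
    """Check that mandatory fields exist and the start date is an ISO date."""
    for field in ("job_title", "location", "start_date"):
        if not request.get(field):
            raise ValidationError(f"missing field: {field}")
    try:
        date.fromisoformat(request["start_date"])
    except ValueError as exc:
        raise ValidationError("start_date must be YYYY-MM-DD") from exc


def enrich(request: dict) -> dict:
    """Add job code and legal entity resolved from the lookup tables."""
    try:
        job_code = JOB_CODES[request["job_title"].lower()]
        legal_entity = LEGAL_ENTITIES[request["location"]]
    except KeyError as exc:
        raise ValidationError(f"no lookup entry for {exc}") from exc
    return {**request, "job_code": job_code, "legal_entity": legal_entity}


def submit_to_hcm(payload: dict) -> dict:
    """Stub for the call to the HCM system; a real flow would use an adapter."""
    return {"status": "CREATED", "hire": payload}


def create_new_hire(request: dict) -> dict:
    """Tool entry point: validate, enrich, then hand over to the back end."""
    validate(request)
    return submit_to_hcm(enrich(request))


result = create_new_hire(
    {"job_title": "Backend Developer", "location": "Milan", "start_date": "2025-10-01"}
)
print(result["status"], result["hire"]["job_code"], result["hire"]["legal_entity"])
```

The point of the sketch is the division of labor: the agent only supplies business-level fields, while codes, entities, and the actual system call are resolved on the integration side.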

What is the value add?

- End-to-end automation,

- reduced errors,

- full HR control over what the AI is allowed to do.

CRM – AI Sales / Service Copilot

Scenario

A copilot for sales and customer service.

MCP Tools

- `getCustomer360`

- `createOpportunity`

- `updateOpportunityStage`

- `createServiceRequest`

- `checkOrderStatus`

Example

“Create a €250k opportunity for customer ACME based on the last order.”

- The AI:

  - calls `getCustomer360`,

  - then calls `createOpportunity`.

- OIC:

  - retrieves data from CRM and ERP,

  - normalizes it,

  - applies commercial rules.
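The chaining of the two tool calls in this example can be sketched as follows. Both functions are stubs that stand in for the MCP tools; the shape of the returned data (`last_order`, `source_order_id`, `stage`) is an assumption made for illustration and does not reflect an actual CRM payload.

```python
def get_customer_360(customer_name: str) -> dict:
    """Stub for the getCustomer360 tool: returns a consolidated customer view."""
    return {
        "customer": customer_name,
        "last_order": {"id": "ORD-42", "product": "Cloud subscription"},
    }


def create_opportunity(customer: str, amount_eur: int, source_order_id: str) -> dict:
    """Stub for the createOpportunity tool."""
    return {
        "customer": customer,
        "amount_eur": amount_eur,
        "source_order_id": source_order_id,
        "stage": "Qualification",
    }


def handle_request(customer_name: str, amount_eur: int) -> dict:
    """Agent-side plan: fetch context first, then act on it."""
    profile = get_customer_360(customer_name)
    return create_opportunity(
        profile["customer"], amount_eur, profile["last_order"]["id"]
    )


opportunity = handle_request("ACME", 250_000)
print(opportunity)
```

The output of the first tool feeds the second, which is how the agent grounds its action in the customer's last order instead of guessing.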

What is the added value?

- AI acting on real enterprise data,

- complete cross-application context,

- no direct exposure of CRM systems.

EPM – AI Financial Planning & Analysis Performance Assistant

Scenario

“Simulate a 5% increase in personnel costs and tell me the impact on EBITDA.”

MCP Tools

- `runRollingForecast`

- `createScenarioSimulation`

- `getActualVsBudget`

- `submitForecastForApproval`

What is the value add?

- AI acting like a controller,

- protection of official versions,

- fundamental integration with ERP and HCM.

ERP – AI Finance & Operations Agent

Scenario

An assistant for operations and finance.

MCP Tools

- `createPurchaseOrder`

- `approveInvoice`

- `getBudgetAvailability`

- `retrieveSupplierData`

Example

“Create a purchase order for 50 laptops if the IT budget allows it.”

- The LLM:

  - calls `getBudgetAvailability`,

  - if positive, invokes `createPurchaseOrder`.

- OIC:

  - manages approvals,

  - enforces compliance controls,

  - posts transactions to Oracle ERP Cloud.
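The conditional logic in this example, checking the budget first and creating the order only if it is sufficient, can be sketched as below. The budget figure, the unit price, and the response fields are invented for the example, and both tool functions are stubs and not calls to Oracle ERP Cloud.

```python
# Hypothetical budget data standing in for the ERP back end.
BUDGETS_EUR = {"IT": 75_000}


def get_budget_availability(cost_center: str) -> dict:
    """Stub for the getBudgetAvailability tool."""
    return {"cost_center": cost_center, "available_eur": BUDGETS_EUR.get(cost_center, 0)}


def create_purchase_order(item: str, quantity: int, total_eur: int) -> dict:
    """Stub for the createPurchaseOrder tool."""
    return {"status": "SUBMITTED", "item": item, "quantity": quantity, "total_eur": total_eur}


def order_if_budget_allows(item: str, quantity: int, cost_center: str, unit_price_eur: int) -> dict:
    """Agent-side plan: check the budget, then order only if it covers the total."""
    total_eur = quantity * unit_price_eur
    budget = get_budget_availability(cost_center)
    if budget["available_eur"] < total_eur:
        return {"status": "REJECTED", "reason": "insufficient budget"}
    return create_purchase_order(item, quantity, total_eur)


# 50 laptops at an assumed unit price of 1,200 EUR fit the assumed IT budget.
approved = order_if_budget_allows("laptop", 50, "IT", 1_200)
# 100 laptops at the same price exceed it.
rejected = order_if_budget_allows("laptop", 100, "IT", 1_200)
print(approved["status"], rejected["status"])
```

In a real deployment the approval and compliance steps would sit inside the integration behind `createPurchaseOrder`, so they apply regardless of which agent or model issues the call.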

What is the value add?

- Actionable AI, not just descriptive,

- compliance with internal control rules,

- complete audit trail.

Strategic Value Summary

In summary, combining OIC with MCP complements existing capabilities and enables organizations to achieve:

- truly operational AI: not just informational but actionable,

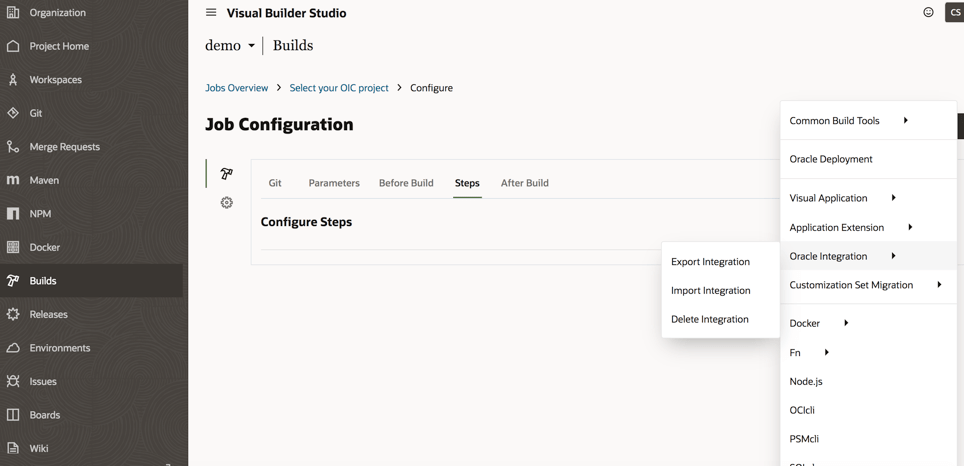

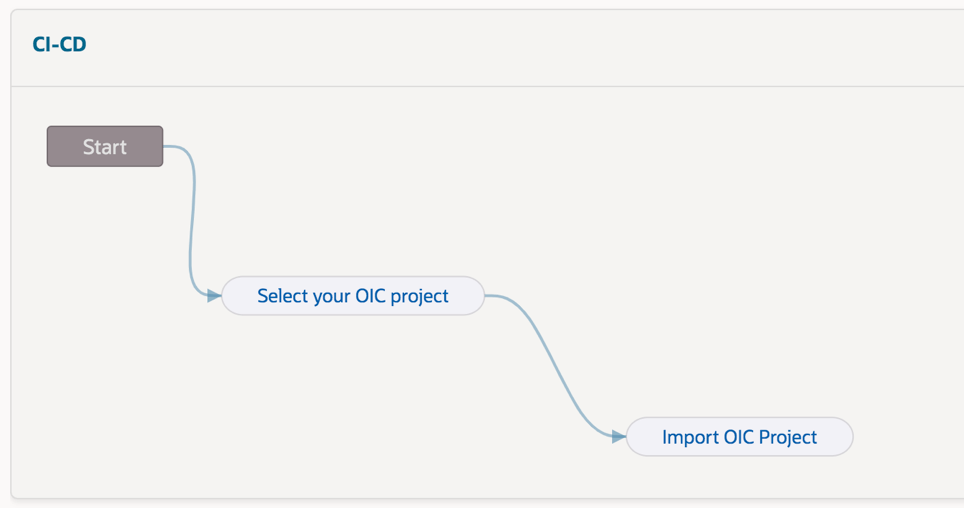

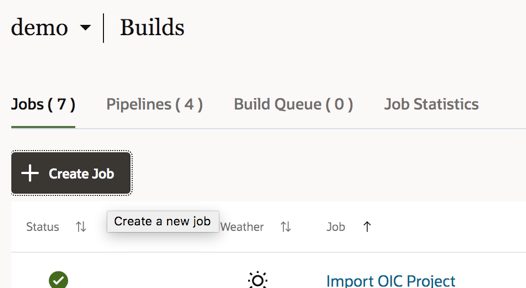

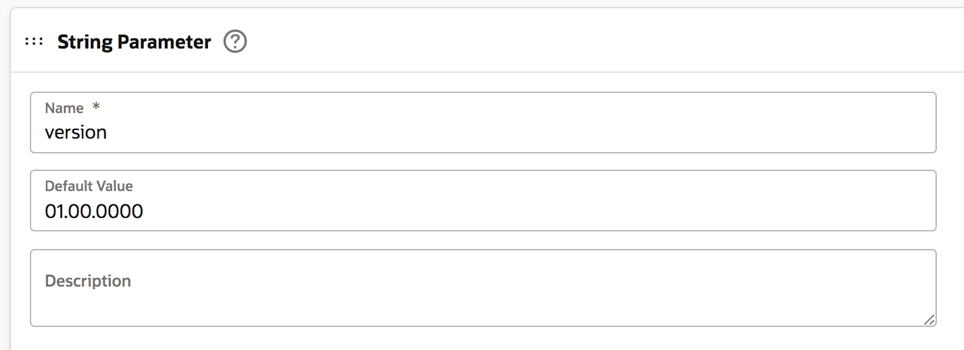

- immediate reuse of existing integrations by exposing them through the standard MCP protocol (this can be done at the project level in OIC),

- decoupling between AI models and core systems,

- enterprise governance of AI actions,

- future scalability (new models, new agents, same tools).

References: