Business rules (referred to below as “decisions”) play a crucial role in any process automation platform.

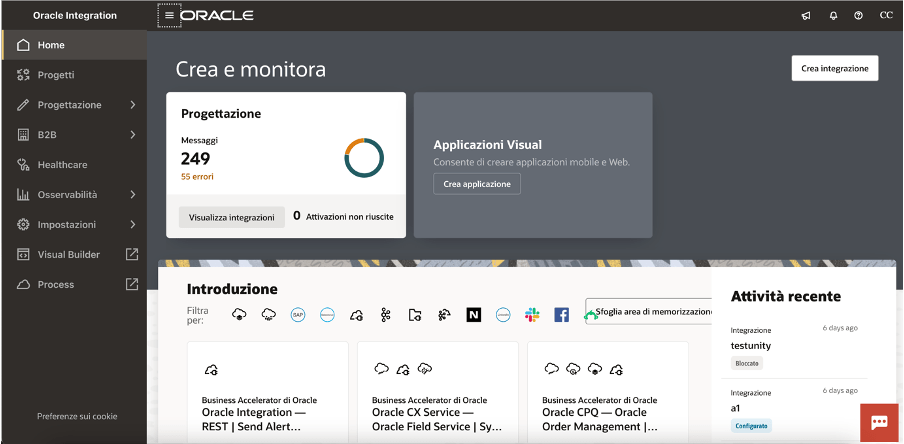

As you probably already know, Oracle Integration (OIC) is a complete, cloud-based, fully Oracle-managed business automation platform that enables customers to connect their applications and data, automate business processes, and innovate with AI. We describe it as a unique and complete toolkit for integrating and connecting applications and technologies.

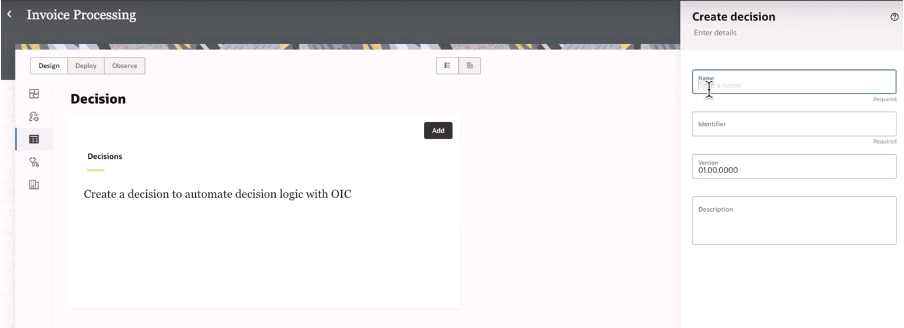

Today, as a new enhancement to this platform, you can build decision rules directly in integration projects. This gives you the flexibility and agility to build new automation processes that leverage rules based on “if-then-else” or “decision table” patterns.

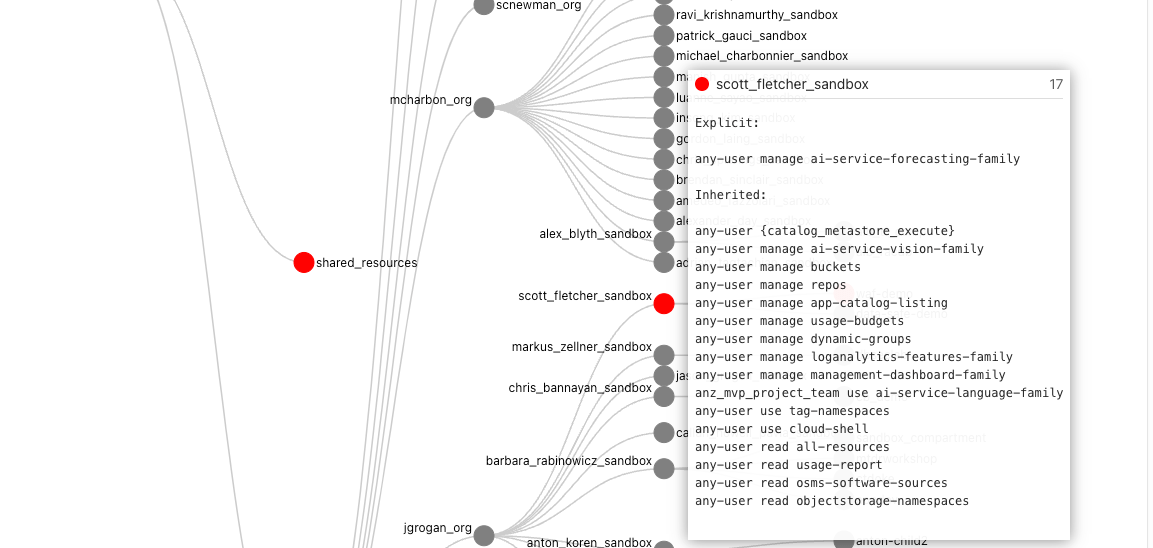

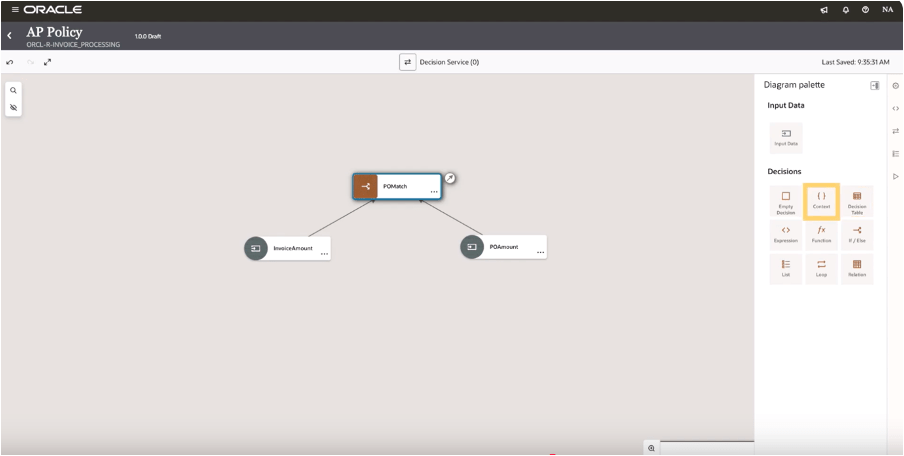

When building your integration projects in OIC, you can now add exactly the components your implementation needs: integration flows with connections, robots, B2B, Healthcare, and now Decisions, too.

Once decisions are added to your project, you can design them and then test what you have implemented to verify that the rules are correct.

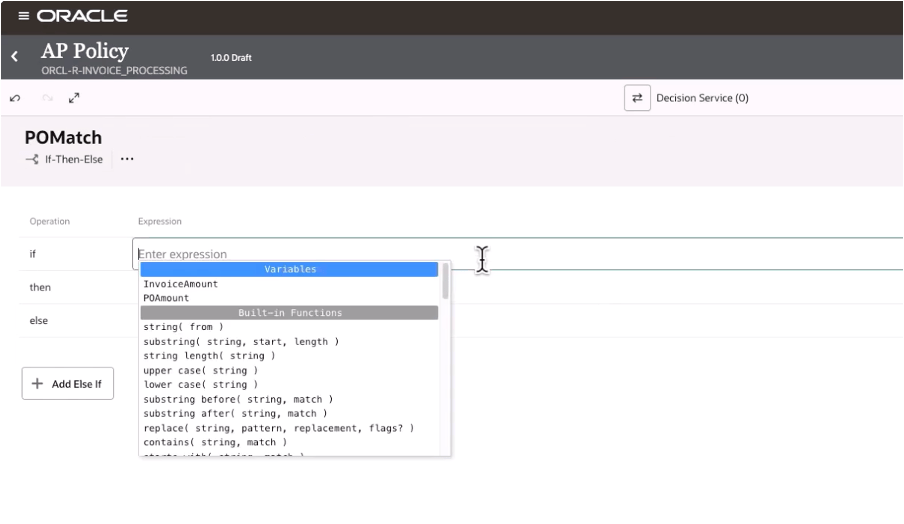

After selecting the decision type, you can design your logic.

In this way, decisions help you ensure consistent decision-making throughout the organization. They ensure that the same conditions always lead to the same outcomes, reducing the risk of errors caused by subjective or inconsistent judgments. This standardization now extends to all components in OIC, and it is particularly useful when the same decisions are made across multiple processes, because it also encourages reuse of those rules.

Decisions define clear decision points that can be automated within a process, such as determining eligibility for a loan, assessing the risk level of a transaction, or triggering approvals. By automating these rules, you can reduce manual intervention, accelerate processes, and ensure timely and accurate outcomes.
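To make the “if-then-else” pattern concrete, here is a minimal sketch of a loan eligibility decision point written in plain Python. It is not OIC code (in OIC you design decisions in the browser), and the field names, thresholds, and outcomes are assumptions chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoanApplication:
    # Hypothetical input fields, chosen only for illustration.
    credit_score: int
    annual_income: float
    requested_amount: float


def loan_eligibility(app: LoanApplication) -> str:
    """Return 'reject', 'review', or 'approve' for a loan application."""
    # Illustrative thresholds; a real policy would come from the business.
    if app.credit_score < 580:
        return "reject"
    elif app.requested_amount > 5 * app.annual_income:
        return "review"
    else:
        return "approve"


if __name__ == "__main__":
    print(loan_eligibility(LoanApplication(720, 60_000, 100_000)))
```

Because the rule is a pure function of its inputs, the same application always produces the same outcome, which is the consistency property described above.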

Consider that decisions are typically decoupled from the process logic, meaning they can be modified independently of the core workflow. This makes it easier to adjust processes when business requirements change (whether due to new regulations, market conditions, or organizational changes) without needing to re-engineer the entire process. This flexibility helps the business remain agile and responsive.

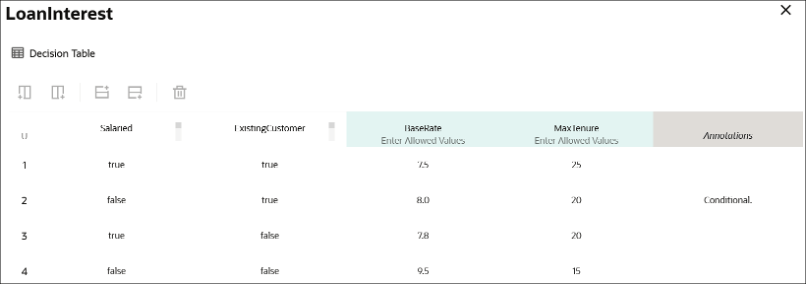

The sample here shows how business rules can be built as a decision table, in a way that is flexible, easy to maintain, and editable from a simple, standard browser.

A decision table, like the one shown in the sample, can consist of an input expression and several input entries, represented as columns within the table. You can use input variables, outputs of other decisions, or built-in functions to define input expressions.
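As a rough mental model of how such a table is evaluated, the sketch below represents each row as a set of input entries plus an output. It is plain Python rather than anything OIC-specific. The column names, the sample rows, and the “first matching row wins” policy are all assumptions made for the example.

```python
from typing import Callable, List, NamedTuple, Optional


class Rule(NamedTuple):
    # One table row: an input entry per input column, plus the output value.
    amount_entry: Callable[[float], bool]
    country_entry: Callable[[str], bool]
    risk_level: str


def any_value(_value: object) -> bool:
    """Input entry that matches anything (an empty cell, in table terms)."""
    return True


# Hypothetical table with two input columns: transaction amount and country.
RISK_TABLE: List[Rule] = [
    Rule(lambda amount: amount >= 10_000, any_value, "high"),
    Rule(lambda amount: amount >= 1_000, lambda country: country != "US", "medium"),
    Rule(any_value, any_value, "low"),
]


def evaluate(table: List[Rule], amount: float, country: str) -> Optional[str]:
    """Return the output of the first row whose input entries all match."""
    # Assumed "first hit" policy; returns None when no row matches.
    for rule in table:
        if rule.amount_entry(amount) and rule.country_entry(country):
            return rule.risk_level
    return None


if __name__ == "__main__":
    print(evaluate(RISK_TABLE, 2_000, "FR"))
```

Keeping the rows as data means that adding or reordering a rule changes the outcome without touching the evaluation logic.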

Of course, business rules (decisions) are essential for ensuring that processes are compliant with laws, regulations, and internal policies. For example, in industries such as finance, healthcare, or insurance, where compliance is critical, business rules ensure that processes adhere to the necessary legal requirements. Decisions can be updated when regulations change, ensuring ongoing compliance without the need for manual oversight.

Looking at how decisions are used, we can highlight a few advantages in particular:

Reusability: you can build your rules once and reuse them, which makes them more efficient to create and maintain and lets you change policies faster without impacting the automation solution. Furthermore, you can reuse them from within an integration flow, a process workflow, or any application.

Efficiency: policy makers don’t have to rely on an integration specialist; they can build and maintain their own policies themselves.

Fast policy change: you can change policies quickly without disrupting other areas of an automation solution, because policy details can be changed independently of the components that use a decision.
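The reuse and fast-change points can be illustrated with a small sketch in which two callers share one decision and the policy detail lives outside both of them. This is plain Python, not OIC code; the policy field, the function names, and the two callers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ApprovalPolicy:
    # Hypothetical policy detail, owned by the policy maker.
    auto_approve_limit: float


def needs_manual_approval(policy: ApprovalPolicy, amount: float) -> bool:
    """The shared decision: amounts above the limit need manual approval."""
    return amount > policy.auto_approve_limit


def integration_flow(policy: ApprovalPolicy, order_amount: float) -> str:
    # Hypothetical caller 1: an integration that forwards or holds an order.
    return "hold" if needs_manual_approval(policy, order_amount) else "forward"


def process_workflow(policy: ApprovalPolicy, expense_amount: float) -> str:
    # Hypothetical caller 2: a workflow that routes an expense claim.
    if needs_manual_approval(policy, expense_amount):
        return "route to manager"
    return "auto-approve"


if __name__ == "__main__":
    policy = ApprovalPolicy(auto_approve_limit=1_000)
    print(integration_flow(policy, 800), "/", process_workflow(policy, 800))

    # The policy maker tightens the limit; neither caller is edited.
    policy.auto_approve_limit = 500
    print(integration_flow(policy, 800), "/", process_workflow(policy, 800))
```

Both callers pick up the new limit without being modified, which is the kind of independence between policy details and consuming components described in the list above.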

Now, stay tuned: very soon this feature will be generally available (GA), and no longer only in Limited Availability, on all OIC instances.

In summary:

Decisions, and business rules in general, enable greater flexibility, reduce operational risks, and improve both internal operations and the external customer experience.

References:

https://docs.oracle.com/en/cloud/paas/application-integration/