We all know that data is enormously valuable to businesses, whether it supports day-to-day transactional activities (Online Transaction Processing – OLTP) or helps with planning, problem solving and decision making (Online Analytical Processing – OLAP). Businesses rely heavily on both kinds of processing to support their most important strategies and activities.

Until recently, companies had to invest heavily in provisioning, securing, patching and running both kinds of online data processing. In most cases, even after adopting the Cloud, companies still had to rely on their own skills to make sure their databases were properly patched, secured, tuned and managed.

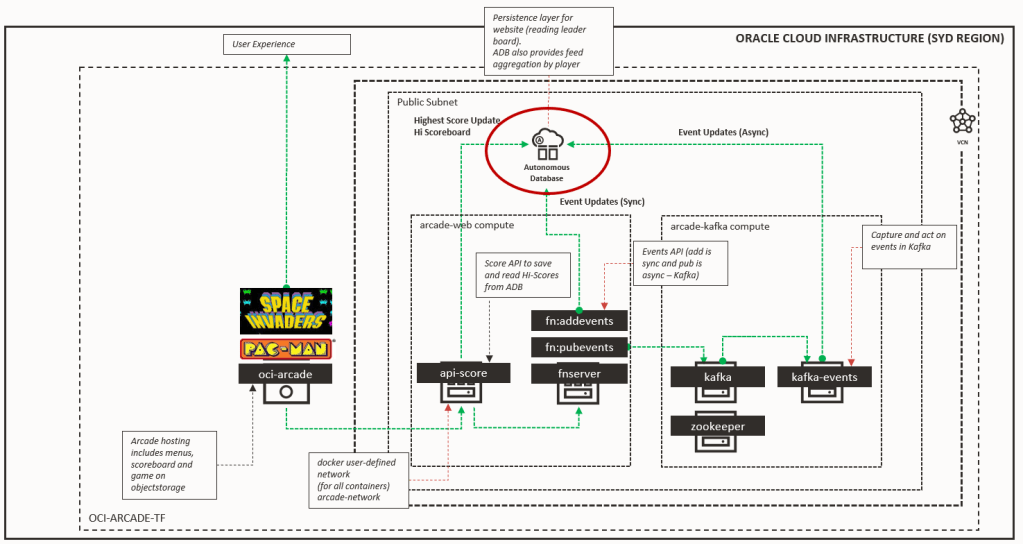

Today, however, there is another option, following Oracle's recent announcements around Autonomous Databases for both OLAP and OLTP workloads. Oracle has taken automation to a new level with the help of Machine Learning. The idea is that the database is self-sufficient, with a full set of automated activities ranging from patching and securing to optimising. This not only reduces the effort needed to run data workloads but also minimises human error, freeing teams from simply keeping the lights on so they can focus on crucial business activities around innovation and differentiation.


In November 2018, I had the privilege of attending the Australian Oracle User Group national conference, “#AUSOUG Connect”, in Melbourne. My role was to interview on video as many of the conference's speakers and exhibitors as possible. Overall, that meant 10 interviews over the course of the day, 90 minutes of footage, 34 short clips to share, and many hours of reviewing and post-editing to capture the best parts.