This is my 12th #DaysOfArm article that tracks some of the experiences that I’ve had so far. To recap from the first post (here) on June 12 2021:

It’s been just over 2 weeks since the launch of Ampere Arm deployed in Oracle Cloud Infrastructure (OCI). Check this article out to learn more (here). And it’s been about one week since I started looking into the new architecture and deployment, since I started provisioning the VM.Standard.A1.Flex Compute Shape on OCI and since I started migrating a specific application that has many different variations to it to test it all out.

This is my next learning, where I’ve successfully deployed Pelias, an open-source geocoding API, all on a 4 OCPU instance with 24 GB of RAM in an Always Free Tier tenancy.

(Update – 11th Oct 2021 – there’s been some changes made as this is a working document … as some of the packages have changed as well as additional fixes to make it easier …)

(Update – 28th Dec 2022 – I’ve refreshed the instructions for this blog post to match what is happening with Pelias as there’s been some cool changes to support arm64).

Over the past couple of weeks, I’ve been working in the background with some open-source projects and one specifically is in the space of geocoding – Pelias (here).

There is a reason why I spent a little more time on this project than others.

There are plenty of existing geocoding solutions and APIs out there. Of the ones that I researched, I found that they have different terms and conditions that limit their use: they are only available as a trial, they require a paid account, or they restrict the use of the geocode API to their other services, including maps or routes.

I wanted to research and create something that aligned with the purpose of Oracle Cloud Always Free Tier.

Here is a high-level outline of how to get this going on OCI.

1. Provision Compute / Network

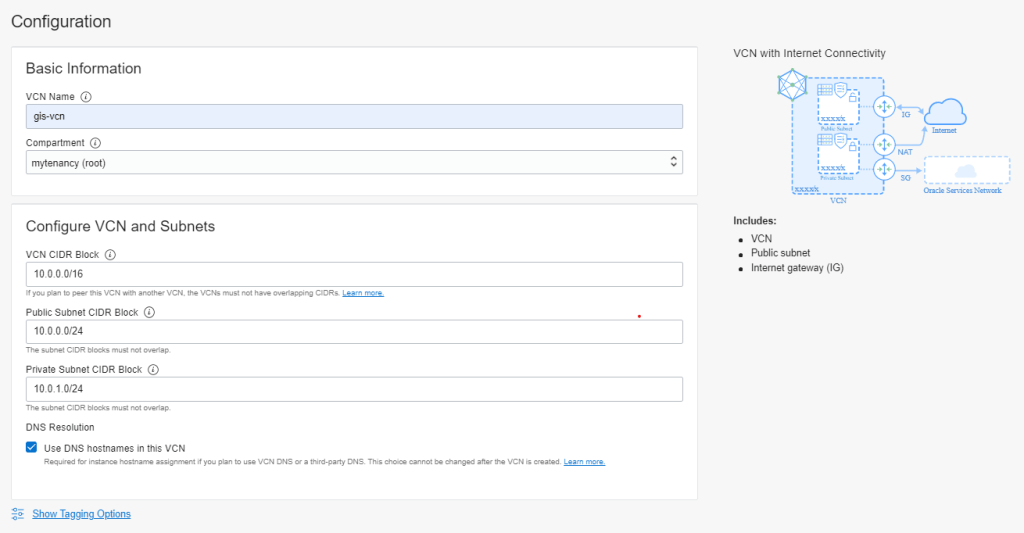

I provisioned a Virtual Cloud Network (VCN) using the VCN Wizard (noting that there is the service limit of 1 VCN in Always Free Tier).

Note: If you want this service available outside of the network, then add an ingress rule for destination port 4000/TCP.

I provisioned a Compute instance with the following configuration.

- VM.Standard.A1.Flex Compute Shape

- 4 OCPUs with 24 GB of RAM (which is the Always Free Tier service limit)

- ~150 GB of block storage for the boot volume

- Oracle Linux 8 (with the latest update)

If you want more assistance, there is a tutorial that you can follow (here).

2. Install Software Requirements

Once you have provisioned and connected to the instance using either ssh or putty, here is a brief list of commands to run as root.

dd iflag=direct if=/dev/sda of=/dev/null count=1

echo "1" | sudo tee /sys/class/block/sda/device/rescan

cd /usr/libexec

./oci-growfs

yum update -y && yum install -y git python3-devel

python3 -m pip install --upgrade pip

dnf config-manager --add-repo=https://download.docker.com/linux/centos/docker-ce.repo

yum install -y docker-ce

service docker start

systemctl enable docker

useradd pelias

usermod -a -G docker pelias

mkdir -p /data/pelias

chown pelias:pelias -R /data

firewall-cmd --add-port 4000/tcp --permanent --zone=public

firewall-cmd --add-port 443/tcp --permanent --zone=public

firewall-cmd --add-port 80/tcp --permanent --zone=public

firewall-cmd --reload

In summary, this is what this does.

- Expands the default boot volume size to the ~150 GB that has been provisioned.

- Updates the packages and installs git and additional python3 packages.

- Upgrades pip (python installer).

- Installs the docker (community edition) repository and then docker itself.

- Starts docker and enables it as a service.

- Creates a user called pelias and adds that user to the docker group.

- Creates a /data directory and changes the ownership of /data to pelias.

- Updates the firewall for pelias.
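Before moving on, it can be worth confirming that the setup took effect. The following is a minimal sketch rather than part of the original steps: `user_in_group` is a hypothetical helper name, and the example checks in the comments assume the pelias user and docker group created above.

```shell
# user_in_group USER GROUP: succeeds only if USER exists and belongs to GROUP.
user_in_group() {
  id -nG "$1" 2>/dev/null | tr ' ' '\n' | grep -qx -- "$2"
}

# Example checks (run on the instance after the setup commands):
#   user_in_group pelias docker && echo "pelias can use docker"
#   df -h /                      # root filesystem should reflect the expanded boot volume
#   systemctl is-active docker   # should report that the service is active
```

If the group check fails, the later docker build commands run as pelias will most likely fail with a permission error on the docker socket.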

(Update 28th Dec 2022 – I tried this with podman to see what would happen however I wasn’t able to build it properly “yet”. I’ve continued with docker-ce.)

3. Build Images (for github)

Once this has been executed as root, then the following can be executed as pelias (the application user). This will build the docker images and store them locally. You can find the documentation for Pelias using docker (here).

(Update: 11th Oct 2021 – there were changes that were included to update pbf2json to be built for arm64 within the docker image as well as updating the scripts to refer to this version)

(Update: 28th Dec 2022 – the pbf2json has been built for arm64 – YAY !!! and has been included into the npm packages. this has simplified the interpolation and prepare phase to import the data)

(Update: 11th Oct 2021 – included a version of the elasticsearch 7.9.3 that also fixed an issue related to the user permissions accessing the bundled jdk)

(Update: 28th Dec 2022 – reverted to the standard docker project to check if the updated version of elasticsearch is sufficient. It’s been upgraded to use 7.16.1 which is multi-arch and supports arm64)

(Update: 11th Oct 2021 – updated references to golang to be arm64)

mkdir repos

cd repos

git clone https://github.com/jlowe000/docker-baseimage && cd docker-baseimage && docker build -t pelias/baseimage . && cd ..

git clone https://github.com/jlowe000/docker-libpostal_baseimage && cd docker-libpostal_baseimage && docker build -t pelias/libpostal_baseimage . && cd ..

git clone https://github.com/jlowe000/libpostal-service && cd libpostal-service && docker build -t pelias/libpostal-service . && cd ..

git clone https://github.com/pelias/schema && cd schema && docker build -t pelias/schema:master . && cd ..

git clone https://github.com/jlowe000/api && cd api && docker build -t pelias/api:master . && cd ..

git clone https://github.com/pelias/placeholder && cd placeholder && docker build -t pelias/placeholder:master . && cd ..

git clone https://github.com/pelias/whosonfirst && cd whosonfirst && docker build -t pelias/whosonfirst:master . && cd ..

git clone https://github.com/pelias/openstreetmap && cd openstreetmap && docker build -t pelias/openstreetmap:master . && cd ..

git clone https://github.com/pelias/openaddresses && cd openaddresses && docker build -t pelias/openaddresses:master . && cd ..

git clone https://github.com/pelias/geonames && cd geonames && docker build -t pelias/geonames:master . && cd ..

git clone https://github.com/pelias/csv-importer && cd csv-importer && docker build -t pelias/csv-importer:master . && cd ..

git clone https://github.com/pelias/transit && cd transit && docker build -t pelias/transit:master . && cd ..

git clone https://github.com/jlowe000/polylines && cd polylines && docker build -t pelias/polylines:master . && cd ..

git clone https://github.com/jlowe000/interpolation && cd interpolation && docker build -t pelias/interpolation:master . && cd ..

git clone https://github.com/pelias/pip-service && cd pip-service && docker build -t pelias/pip-service:master . && cd ..

git clone https://github.com/pelias/fuzzy-tester && cd fuzzy-tester && docker build -t pelias/fuzzy-tester:master . && cd ..

Note: I’ve had to make a set of changes, which I’ve included in forked repositories specific to arm64. These cater for some libpostal components that needed to be ported to arm64, and for changes needed to make the docker builds work on arm64.
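Each of the build lines above follows the same clone-then-build pattern, so it could be wrapped in a small helper. This is only a sketch: `repo_dir` and `clone_and_build` are hypothetical names that are not part of Pelias, and the example URL and tag are taken from the list above.

```shell
# repo_dir URL: directory name that `git clone URL` creates (strips a trailing .git).
repo_dir() {
  basename "$1" .git
}

# clone_and_build URL TAG: clone the repository, then build its image under TAG.
# The subshell keeps the caller's working directory unchanged.
clone_and_build() {
  cb_dir="$(repo_dir "$1")"
  git clone "$1" && (cd "$cb_dir" && docker build -t "$2" .)
}

# Example:
#   clone_and_build https://github.com/pelias/schema pelias/schema:master
```

Running the build in a subshell means a failed build does not leave the shell inside the cloned directory, which the chained `cd ..` form can do.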

(Update I’m currently investigating the interpolation service which may result in another forked repository.)

(Update 11th Oct 2021 – interpolation service has been fixed and has been checked in)

(Update 28th Dec 2022 – there were some changes to the different images so I sync’ed the fork to bring in the changes from the pelias repos. There’s also been some small changes in the npm dependencies specifically the sqlite3 node package that required a build).

4. Start Importing Datasets

As per the documentation, there are a few setup tasks required.

4.1 Setup the import environment

This is once-off setup to install the command and import tools.

(Updated 11th Oct 2021 – Updated to include a forked version of the docker images to include the necessary elasticsearch version as well as fixing a user permission issue related to accessing the bundled jdk)

# install docker-compose (required by pelias command)

python3 -m pip install --user docker-compose

# clone this repository

cd /home/pelias/repos

git clone https://github.com/jlowe000/docker.git && cd docker

# install pelias script

# this is the _only_ setup command that should require `sudo`

ln -s "$(pwd)/pelias" /home/pelias/.local/bin/pelias

4.2 Setup the project

There are different projects available that have pre-defined locations and regions. The sample below is Portland, a small dataset that is good for a test. There are some things that need to be set up for the project before running the import process.

(Updated 14th Oct 2021 – I’ve updated the repository so it already refers to elasticsearch 7.9.3 which is a multi-arch version supporting arm64 and x86)

# cd into the project directory

cd /home/pelias/repos/docker/projects/portland-metro

# create a directory to store Pelias data files

# see: https://github.com/pelias/docker#variable-data_dir

# note: use 'gsed' instead of 'sed' on a Mac

mkdir /data/pelias/portland-metro

sed -i '/DATA_DIR/d' .env

echo 'DATA_DIR=/data/pelias/portland-metro' >> .env

In the project directory, there is a hidden file .env that is used by the tools. The data directory needs to be created, and the DATA_DIR entry in this file updated to match, before running the scripts.
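Because the same delete-then-append step is needed for every project, it can be captured in a small function. This is a minimal sketch, assuming GNU sed (as on Oracle Linux); `set_env_var` is a hypothetical helper name and not part of the pelias tooling.

```shell
# set_env_var FILE KEY VALUE: remove any existing KEY= line in FILE,
# then append KEY=VALUE, so the key ends up defined exactly once.
set_env_var() {
  sev_file="$1"
  sev_key="$2"
  sev_value="$3"
  touch "$sev_file"
  # GNU sed in-place delete; the note above suggests 'gsed' on a Mac
  sed -i "/^${sev_key}=/d" "$sev_file"
  printf '%s=%s\n' "$sev_key" "$sev_value" >> "$sev_file"
}

# Example:
#   set_env_var .env DATA_DIR /data/pelias/portland-metro
```

Running it twice leaves a single DATA_DIR line, so it is safe to repeat when switching projects.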

4.3 Start the Elasticsearch cluster

Elasticsearch is a main component of the Pelias architecture. You can get a view of the solution architecture (here). Before starting the import process, an Elasticsearch cluster needs to be started.

(Updated 28th Dec 2022 – I’ve updated the elasticsearch version to match the docker compose images – the elasticsearch docker hub images are still amd64 only, so the image needs to be built from the elasticsearch repo)

cd /home/pelias/repos/docker/images/elasticsearch/7.16.1

# build local docker image

docker build -t pelias/elasticsearch:7.16.1 .

Elasticsearch can then be started and the index for the cluster created (which is executed once off).

# start elasticsearch

pelias elastic start

pelias elastic wait

pelias elastic create

I also found early on that the import would sometimes stop. This related to a cluster_block_exception, where there were more changes to the indices than the cluster could handle. Typically, once I saw this issue in the output, it was not recoverable. To resolve this issue, the following curl commands can be executed to remove these constraints. It may be worth reapplying them later on.

curl -XPUT -H "Content-Type: application/json" http://localhost:9200/_cluster/settings -d '{ "transient": { "cluster.routing.allocation.disk.threshold_enabled": false } }'

curl -XPUT -H "Content-Type: application/json" http://localhost:9200/_all/_settings -d '{"index.blocks.read_only_allow_delete": null}'

4.4 Load Dataset

pelias download all

pelias prepare all

pelias import all

(Update 11th Oct 2021 – These commands do take time. So you may want to run these in background.)
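One way to run the three commands in the background is with nohup, so they keep going if the ssh session drops. This is a sketch only: `run_in_background` and the `BG_PID` variable are hypothetical names, and the log path in the example is an assumption.

```shell
# run_in_background LOGFILE COMMAND [ARGS...]: start COMMAND detached from the
# terminal, send its output to LOGFILE and record its process id in BG_PID.
run_in_background() {
  bg_log="$1"
  shift
  nohup "$@" > "$bg_log" 2>&1 &
  BG_PID=$!
}

# Example (the && chain stops at the first step that fails):
#   run_in_background /data/pelias/import.log \
#     sh -c 'pelias download all && pelias prepare all && pelias import all'
#   tail -f /data/pelias/import.log
```

Chaining with `&&` means a failed download does not go on to prepare or import partial data.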

These commands download the different datasets used for geocoding that region. The different sources are converted, interpolated and then imported into the sqlite databases and elasticsearch.

4.5 Startup all the services
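Once the import has finished, the disk threshold check that was switched off earlier can be restored. The sketch below assumes Elasticsearch is still listening on localhost:9200 as in the commands above; `disk_threshold_payload` and `set_disk_threshold` are hypothetical helper names.

```shell
ES_URL="${ES_URL:-http://localhost:9200}"

# disk_threshold_payload VALUE: JSON body for the cluster settings API.
# VALUE is true, false, or null (null resets the setting to its default).
disk_threshold_payload() {
  printf '{ "transient": { "cluster.routing.allocation.disk.threshold_enabled": %s } }' "$1"
}

# set_disk_threshold VALUE: apply the setting to the running cluster.
set_disk_threshold() {
  curl -sS -XPUT -H "Content-Type: application/json" \
    "${ES_URL}/_cluster/settings" -d "$(disk_threshold_payload "$1")"
}

# Example, after the import completes:
#   set_disk_threshold true
```

With the check back on, Elasticsearch may block writes again if the disk is close to full, so it is worth checking free space with `df -h` first.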

The data is loaded and the services can be started. Once it is up and running then other projects can be loaded in and the data will be presented by these services.

pelias compose up

5. Test the APIs

From here, we can test it out … The documentation (here) is a good place to start. The easiest one to try is the search API where you can find the documentation (here). And to get a better understanding of the response, then check out the documentation (here).
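As a quick smoke test, the search API can be called with curl from the instance itself. This is a minimal sketch, assuming the API is listening on port 4000 (the port opened in the firewall earlier); `pelias_search_url` and `pelias_search` are hypothetical helper names and the query text is just an example.

```shell
PELIAS_URL="${PELIAS_URL:-http://localhost:4000}"

# pelias_search_url: endpoint of the Pelias search API.
pelias_search_url() {
  printf '%s/v1/search' "$PELIAS_URL"
}

# pelias_search TEXT: query the search API; curl URL-encodes the text parameter.
pelias_search() {
  curl -sS --get "$(pelias_search_url)" --data-urlencode "text=$1"
}

# Example (the response is a GeoJSON document):
#   pelias_search "Portland City Hall"
```

Using `--data-urlencode` avoids having to escape spaces and punctuation in the query by hand.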

To interactively learn more about the APIs, you can try out the Open Route Service API Playground (here).

It is now ready !!!

There are a couple of things that I’m continuing to work on.

- Valhalla (which is used to turn large Open Street Maps to polylines) has not been ported to arm64. I’m trying to get this going but there are segmentation faults that I’m working through. (Update 11th Oct 2021 – there is an alternate method of getting the polylines. I’ll update this soon.)

- There is some data quality import logging and, when I tried to import North America, it meant I ran out of disk. I had to remove the error and skip output file references from the pelias/interpolation/scripts/conform_oa.sh script. (Update 11th Oct 2021 – this has been fixed)

- There are some references in the pelias/interpolation repository that refer to the x64 version of the pbf2json binaries. These can be replaced with the available arm version. (Update 11th Oct 2021 – this has been fixed)

This would not be possible without the countless people and organisations that contribute to these projects. Here is a list of them based upon the specific projects that I’ve forked or used. If I’ve missed someone, please let me know.

- The team from Pelias (here).

- Peter Johnson “missinglink” (here).

- Julian Simioni “orangejulius” (here).

- Isaac Z. Schlueter (here).

- The team from Elasticsearch (here).

- The team from Valhalla (here).

- The team from OpenAddresses (here) where some of the data is sourced.

- The team from Who’s On First (here) where some of the data is sourced.

- The team from OpenStreetMap (here) where some of the data is sourced.

- The team from Geonames (here) where some of the data is sourced.

- The team from Geofabrik (here) where some of the data is sourced.

- The team from Geocode Earth (here) where some of the data is sourced.

It is important to attribute everyone in this process.

This is not the end as I will work on creating the automation scripts to make this easier to do as well on Oracle Cloud Infrastructure Always Free Tier.

If you want to try this out yourself or work on your own application, sign-up (here) for the free Oracle Cloud Trial. I’d be interested to hear your experiences and learn from others as well. Leave a comment or contact me at jason.lowe@oracle.com if you want to collaborate.

There’s plenty of work to make this more achievable for everyone. And hence sharing this knowledge is the reason why I’m writing this series – #XDaysOfArm. I’ll keep documenting as long as I keep learning.

(Update 27th Dec 2022 – I’ve taken the time to update the dependencies and re-baseline from the pelias repositories and update any changes required.)

I’ve started rebuilding this and there’s been some changes to the original repos that have helped the process. I’ll document my findings when I’m done (hopefully).
