Over the past couple of years, we’ve posted about the OCI Arcade. You can find the original article (here) and the repository (here). As part of the revamp, many things have changed and as such we’ve spent a little bit of time to make it better. Check out some of these new additions.

Continue reading “OCI Arcade Gets A Revamp”Category: NodeJS

#BuildWithAI 2021 – Another Step

Last weekend (from Friday 29th Oct to Tuesday 2nd Nov), was the #BuildWithAI Hackathon 2021 where participants, mentors, sponsors and organisers gathered together to solve real world challenges with AI. This event does not standalone. In a world full of change, this (from my perspective) started last year in the #BuildWithAI Hackathon 2020 and continued to build.

This article is about the event but the event itself is just “Another Step”.

Continue reading “#BuildWithAI 2021 – Another Step”#DaysOfArm (13 of X)

This is my 13th #DaysOfArm article that tracks some of the experiences that I’ve had so far. And just to recap from the first post (here) on June 12 2021.

It’s been just over 2 weeks since the launch of Ampere Arm deployed in Oracle Cloud Infrastructure (OCI). Check this article out to learn more (here). And it’s been about one week since I started looking into the new architecture and deployment, since I started provisioning the VM.Standard.A1.Flex Compute Shape on OCI and since I started migrating a specific application that has many different variations to it to test it all out.

This is my next learning is another retrospective with the OCI Arcade deployment the full stack is now being deployed on 1 OCPU with 6 GB of RAM in an Always Free Tier tenancy.

Continue reading “#DaysOfArm (13 of X)”Get OCI Arcade Free on Arm

There’s been numerous announcements about Oracle Cloud Infrastructure (OCI) adding Arm-based Compute to the list of Virtual Machine (VM) Shapes. Check some of the announcements (here) and (here).

You can also watch it (here) too with Clay Magouyrk, Executive Vice President, Oracle Cloud Infrastructure. Note: The link above has more content and videos.

Have you seen the OCI Arcade? We have built the architecture deployable on OCI Always Free Tier.

Recently in the OCI Always Free Tier, an additional services has been added to include 4 cores and 24 GB of RAM of Ampere A1 Compute. With this additional capacity, it made sense for OCI Arcade to be ported to this A1 Compute Shape. Here is what we did and why.

Continue reading “Get OCI Arcade Free on Arm”Welcome To The OCI Arcade

Each of us will read this from our own perspective. Equally diverse are the outcomes and the actions that you might want to take away from this. So, I ask you: Be open. Find the opportunity. And execute.

This is something that we’ve built for the purposes of an infrastructure demonstration of Oracle Cloud Infrastructure (OCI). The code is available in an open public github repository and we’ve written articles on specific capabilities. We are open to collaborate in building more scenarios which allows this demonstration to scale.

Continue reading “Welcome To The OCI Arcade”We welcome you to the OCI Arcade

Serverless on Always Free Tier with fnproject

I’ve started posting articles related to the project that @stantanev and a few of us are working on. This is snapshot of the puzzle that is to build out a APIs on the Oracle Always Free Tier.

As a demonstration of capability, we built a few different APIs using fnproject (https://fnproject.io/) – an open-source container-native serverless platform. As part of Oracle Cloud Infrastructure, there’s Oracle Functions which is the managed Function-as-a-Service based upon this same project.

Let’s take a look at it here and see what it took to get going. Also, this is being deployed into VM.Standard.E2.1.Micro compute shapes (which is 1 OCPU and 1GB of memory) and hence there are some considerations to make sure we get the most out of the kit we have access to (for free).

Continue reading “Serverless on Always Free Tier with fnproject”Teaching How to Get Started with Oracle Container Engine for Kubernetes (OKE)

In a previous blog, I explained how to provision a Kubernetes cluster locally on your laptop (either as a single node with minikube or a multi-node using VirtualBox), as well as remotely in the Oracle Public Cloud IaaS. In this blog, I am going to show you how to get started with Oracle Container Engine for Kubernetes (OKE). OKE is a fully-managed, scalable, and highly available service that you can use to deploy your containerized applications to the cloud on Kubernetes.

Continue reading “Teaching How to Get Started with Oracle Container Engine for Kubernetes (OKE)”Teaching How to Invoke Gen2 Oracle Cloud Infrastructure (OCI) resources via REST APIs

I am thrilled with the Oracle’s Gen2 Cloud Infrastructure architecture, where Oracle completely separates the Cloud Control Computers from the User Code, so that no threats can enter from outside the cloud and no threats can spread from within tenants.

Obviously with more security, there comes more coordination, especially at the moment of invoking OCI resources APIs. Luckily, Oracle did a good job at providing a simple to use CLI and SDK (see here for more information).

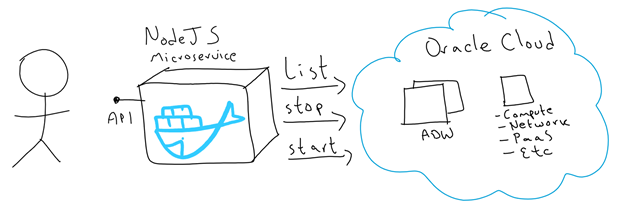

For the purpose of this blog, I built a simple NodeJS application that helps demystify the security aspect of invoking OCI APIs. Check this link for examples of running similar code across other Programming Languages.

My NodeJS application manages OCI resources in order to:

- List ADW instances

- Stop an ADW instance

- Start an ADW instance

I started this NodeJS application to list, start and stop ADW resources. However, I designed this application to easily extend it to invoke any other type of OCI resources.

I containerised this application with Docker, to make it easier to ship and run.

This is a picture of the moving parts:

Teaching How to Get started with Kubernetes deploying a Hello World App

In a previous blog, I explained how to provision a new Kubernetes environment locally on physical or virtual machines, as well as remotely in the Oracle Public Cloud. In this workshop, I am going to show how to get started by deploying and running a Hello World NodeJS application into it.

There are a few moving parts involved in this exercise:

- Using an Ubuntu Vagrant box, I’ll ask you to git clone a “Hello World NodeJS App”. It will come with its Dockerfile to be easily imaged/containerised.

- Then, you will Docker build your app and push the image into Docker Hub.

- Finally, I’ll ask you to go into your Kubernetes cluster, git clone a repo with a sample Pod definition and run it on your Kubernetes cluster.

Continue reading “Teaching How to Get started with Kubernetes deploying a Hello World App”

Teaching How to Design and Secure an API with Oracle API Platform

This blog is the second part of an end-to-end exercise that starts explaining the steps to clone a GitHub repository that contains an agnostic Medical Records application, built by us in NodeJS and which exposes REST API endpoints via a Swagger API-descriptor running locally on Swagger UI (all included as part of the repository). The previous part of this 2-blogs series also explains the steps required to run the MedRec NodeJS application on Docker containers either locally or in the Oracle Public Cloud. For more information about this first part, go here.

Moving to this second part, we are going to cover the following steps:

- Create an Apiary account used to Design APIs (API First approach) and create a new API Project using the existing MedRec Swagger API-definition.

-

We are going to spend a little bit of time playing with Apiary to feel comfortable in areas such as:

- Validating API definitions

- Testing API endpoints

- Switching across out-of-the-box Mock Servers and real Production MedRec service end-points.

-

Login to Oracle API Platform and configure an API, this includes:

- Enforcing Security and other policies.

- Deploy API and securing access level to on-premise and Cloud-based API Gateways.

- Publishing APIs into the API Developers Portal.

- Linking API to Apiary Swagger API-definition living document.

-

Login to API Developers Portal (API Catalog)

- Register a New Application

- Understanding the role of API Keys

- Reviewing MedRec API Documentation

- Registering to consume MedRec APIs

- Testing APIs.

- Understand API Analytics, consumption, metrics and monitoring dashboards.

Continue reading “Teaching How to Design and Secure an API with Oracle API Platform”