In this blog, I want to share my experience after having created many APIs using different approaches and technologies. I am going to describe a simple process that will help you construct APIs, starting from scratch with an idea or requirement and taking it all the way through to happy consumption.

The best part of APIs is that they are microservices enablers, which implies that they are not technology prescriptive, so in this blog you will see that your APIs can be implemented using any technology or programming language.

I decided to use “Jokes” as the vehicle to explain API construction best practices, mainly because jokes are a simple concept that anyone can relate to, but also because I want you to feel compelled to consume these APIs and, by doing so, get a laugh or two.

My original idea with jokes is to:

- Get a random joke.

- Translate the joke to any language.

- Share the original or the translated joke with a friend via SMS.

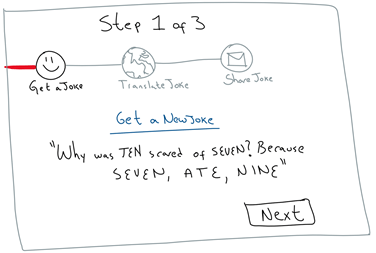

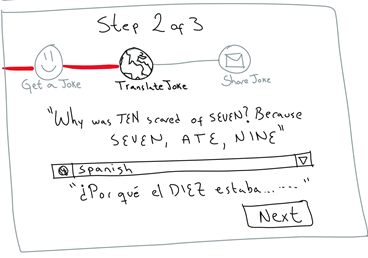

This is a high-level view of what our end solution will look like:

Before we get deep into the API construction process, let’s mention a couple of things about this diagram:

- I am going to get the jokes by consuming an existing API listed on programmableweb.com

- I will use Google Translator to translate the jokes

- Twilio will be my channel to share the jokes with friends via SMS

- I decided to implement the core of my internal API orchestration with NodeJS, so that I could talk about the concept of microservices running on Docker containers.

- The API Gateway is the hero of the story. It is the main point of contact for external API consumers, and it has the muscle to secure each of my APIs with the right level of protection.

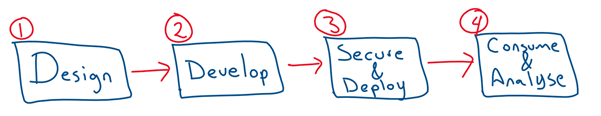

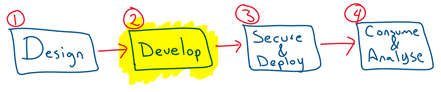

The process that I am going to explain for constructing the APIs consists of 4 stages: Design, Develop, Secure and Deploy, and Consume and Analyse.

Let’s cover one stage at a time.

Design

Designing your APIs is the first step in creating them. It is driven by the API Designer(s).

In this phase, you need to make sure that your idea is properly captured in an API descriptor document.

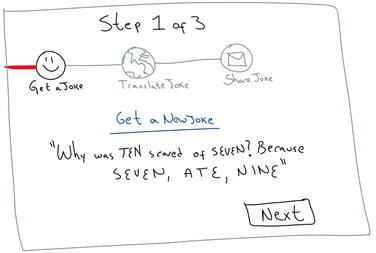

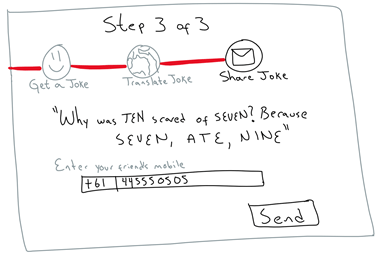

To start, you must ask yourself the following question: “What is it that I want to achieve?” The best way I have found to answer this question is with a simple “storyboard”. For example, for our Jokes APIs, I put together something like this:

As you can see, this is not a technical step. The importance of the storyboard in the “Design phase” is to understand the “high level” need for having the APIs. That is, what is the ultimate requirement that the APIs will enable? In our case, it was about getting random jokes, being able to translate them and sharing them with friends via SMS. In a marketing campaign project, it could be the ability to extract customers’ historical data in real time from CRMs and other legacy systems, and to combine that with real-time customer behaviour in order to offer discounts or instant services to end users.

In this phase, you need to interact with someone who understands these requirements well. In a real project, this involves business people, e.g. marketing leads, digital leads, domain leads, etc.

Once the storyboard is agreed and well understood by business and IT, the API designer(s) need to think about the “object definitions” on which the APIs will work, as well as their “Access or Interaction Level” (Create, Read, Update, Delete).

In our API Jokes example, it is simple:

- The object definition is: “Joke” (involves some text and an identifier)

- The access or interaction levels are:

  - Ability to GET jokes

  - Ability to GET the current joke in a different language

  - Ability to POST a joke notification via SMS

Other examples of “Object definitions” could be: Customers, Purchase Orders, Patients, Physicians, Service Requests, Complaints, etc.

The next step is to capture all of this in a document or file. I am not talking about a Word document, but an “API Definition Document”. If you are a techie and old school like me, think of the “API definition document” as the equivalent of a WSDL for SOAP services. There are multiple options out there, but most people are starting to gravitate towards either OpenAPI (aka Swagger) or API Blueprint (via Apiary). The choice is yours. For the Jokes APIs, I decided to use the OpenAPI (Swagger) definition.

Writing the API definition can be challenging the first time, especially if you are not familiar with the syntax. I recommend using an editor that simplifies this process and notifies you every time you make a typo or other syntax mistake. After trying many options, the tool that I like using for writing my own API definitions is Apiary (www.apiary.io). You can create a free account, and it will give you a browser-based editor with templates, guidelines and tooling that simplify writing the API definitions.

Have a look at the API definition that I wrote for the APIs for Jokes demo here. If you have any questions while creating your own, refer to the OpenAPI (aka Swagger) reference documentation.

The OpenAPI or Swagger API definition has 4 main sections:

1. General information about your APIs: This includes the version of Swagger, description, title, contact, tags, host (where the API will run), schemes, etc.

2. Security: This determines whether your APIs should comply with any security policy. More on this later.

3. Paths: These represent the actual API URLs that you wish to design, together with their access level. For example, for our Jokes APIs, I chose:

- GET /jokes – Gets a new joke. It is expected to return 1 joke containing its identifier and the joke’s text.

- GET /jokes/{id}/translate?language=XXX – Gets a specific joke (by id). It is expected to return the Joke that I chose by id, but written in the selected language.

- POST /jokes/{id}?mobile=XXX&method=XXX&language=XXX – Sends an SMS with the selected joke by id, written in the selected language.

Notice that this step is very open to interpretation, and how you design your APIs is up to you. Just make sure that whatever APIs you create satisfy the storyboard’s expectations.

As part of each Path definition, you should define the inputs and outputs. I strongly recommend that you simply reference your inputs and outputs based on “Object definitions” (mentioned next).
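To make the three paths above a little more concrete, here is a minimal client-side sketch that builds the request for each of them. Only the paths and query parameter names come from the design; the base URL `https://api.example.com`, the helper function names and the sample values (such as `sms` for `method`) are assumptions made for illustration.

```javascript
// Hypothetical base URL; replace it with wherever your API is hosted.
const BASE_URL = 'https://api.example.com';

// GET /jokes
function getJokeRequest() {
  return { method: 'GET', url: new URL('/jokes', BASE_URL).toString() };
}

// GET /jokes/{id}/translate?language=XXX
function translateJokeRequest(id, language) {
  const url = new URL(`/jokes/${encodeURIComponent(id)}/translate`, BASE_URL);
  url.searchParams.set('language', language);
  return { method: 'GET', url: url.toString() };
}

// POST /jokes/{id}?mobile=XXX&method=XXX&language=XXX
function shareJokeRequest(id, { mobile, method, language }) {
  const url = new URL(`/jokes/${encodeURIComponent(id)}`, BASE_URL);
  url.searchParams.set('mobile', mobile);     // searchParams takes care of encoding, e.g. "+"
  url.searchParams.set('method', method);     // 'sms' below is an assumed value
  url.searchParams.set('language', language);
  return { method: 'POST', url: url.toString() };
}

console.log(getJokeRequest());
console.log(translateJokeRequest(42, 'es'));
console.log(shareJokeRequest(42, { mobile: '+61400000000', method: 'sms', language: 'es' }));
```

Each helper returns a plain `{ method, url }` descriptor, so it can be handed to whatever HTTP client you prefer.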

4. Object Definitions: In this section, you write down the thinking that you did earlier about the “Object Definitions”. I recommend that you spend enough time on this section. It is very important to describe your “Object Definitions” properly, because you then simply reference them from within the Paths’ Requests and Responses.

IMPORTANT: I cannot stress enough how important it is to use “Object Definitions” and wire them into your Paths’ Requests and Responses. This way, you will be able to reuse the same object definition across the paths. This will give you high flexibility, consistency and reusability in your APIs. For example, if in the future you need to add a new attribute to one of your Object Definitions, you simply add it in one place and all paths that reference it pick it up automatically. Also, there are many code-generation tools that help simplify the creation of code to implement APIs, and all of them work based on the object definitions.
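As a rough illustration of this wiring, the sketch below expresses a trimmed Swagger 2.0 document as a plain JavaScript object, with two paths that both point to the same `Joke` definition through `$ref`. It is not the actual Jokes API definition: the title, version and the `id` and `joke` property names are assumptions, and `resolveRef` is a hypothetical helper that only handles simple local references.

```javascript
// A trimmed Swagger 2.0 document as a plain object (illustrative only).
const apiDefinition = {
  swagger: '2.0',
  info: { title: 'APIs for Jokes', version: '1.0.0' }, // assumed values
  paths: {
    '/jokes': {
      get: {
        responses: {
          200: { description: 'A random joke', schema: { $ref: '#/definitions/Joke' } },
        },
      },
    },
    '/jokes/{id}/translate': {
      get: {
        parameters: [
          { name: 'id', in: 'path', required: true, type: 'string' },
          { name: 'language', in: 'query', required: true, type: 'string' },
        ],
        responses: {
          200: { description: 'The translated joke', schema: { $ref: '#/definitions/Joke' } },
        },
      },
    },
  },
  // Defined once, referenced from every path that returns a joke.
  definitions: {
    Joke: {
      type: 'object',
      required: ['id', 'joke'],
      properties: {
        id: { type: 'string' },   // assumed field name for the identifier
        joke: { type: 'string' }, // assumed field name for the text
      },
    },
  },
};

// Resolve a simple local "#/a/b" reference against the document.
// (JSON Pointer escapes such as "~1" are not handled in this sketch.)
function resolveRef(doc, ref) {
  return ref
    .replace(/^#\//, '')
    .split('/')
    .reduce((node, key) => node[key], doc);
}

const schema = apiDefinition.paths['/jokes'].get.responses[200].schema;
console.log(resolveRef(apiDefinition, schema.$ref).properties);
```

Because both responses resolve to the very same object, adding an attribute under `definitions.Joke` changes what every referencing path describes.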

Using Apiary as the tool to write your API definition gives you many benefits, for example:

- It gives you a free text editor, while showing on the right a graphical representation of your API definition.

- It comes with code-generation tools that can create API clients in dozens of programming languages, including Python, PHP, NodeJS, Java, Go, C#, etc.

- You can test your API definition on the right panel and Apiary will automatically run Mock services for you, allowing you to easily share and simulate your APIs as black boxes.

- Once you are done with the API definition, Apiary can provide you with Mock services endpoints, that can be shared with the API Consumers to start working for example on the actual consumable apps e.g. mobile app or web app.

- Apiary gives you a Test-Driven Development tool called “Dredd”, which helps you with unit testing and continuous integration testing. More on this next.

- It hooks up your API definition to a Git repository

- It allows you to create teams and share your API definition among the team

- Among others…

To get started with Apiary and Dredd, go to this other blog: https://redthunder.blog/2017/09/20/apiary-designed-apis-tested-using-dredd/ – Also, feel free to hit more online tutorials here.

Develop

Welcome to the second stage, “Developing your APIs”. This stage is usually handled by the group of “API Developers”, who are usually technical people.

Here, you might get your hands dirty, while you build the logic behind your APIs. For example, in the case of the APIs for Jokes, we need to create a simple orchestration to invoke:

- A Jokes service. For this we are re-using an existing Programmable Web API that gets you Jokes.

- Google Translator to translate jokes

- Twilio Service to send SMS messages.

In other cases, you might have to consume data coming from CRMs, ERPs, Databases, SaaS Applications, etc.

As I said before, to build APIs, you can choose whatever technology or tooling you want or feel comfortable with. It could be directly in Java, C#, NodeJS or using vendor specific tools, such as Oracle SOA Suite or Oracle Service Bus. There is no right or wrong approach; any of them can be valid if it makes sense. However, the most important question that you need to ask yourself is:

What systems do I need to integrate with?

This question is going to give you a few application names, in our case:

- REST APIs from Programmable Web

- REST APIs from Google Translator

- REST APIs from Twilio
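The orchestration across these three systems can be sketched as follows. This is not the code from the Jokes project: the `shareJoke` function and the shape of the `jokes`, `translator` and `sms` clients are assumptions, and the stand-in services simply return canned values so that the flow can be run without any network access or credentials.

```javascript
// Orchestrate the back-end calls behind "share a (translated) joke via SMS".
// The three clients are injected, so the flow can be exercised with stand-ins.
async function shareJoke({ jokes, translator, sms }, { mobile, language }) {
  const joke = await jokes.getRandom(); // expected shape: { id, joke }
  const text = language
    ? await translator.translate(joke.joke, language)
    : joke.joke; // no language requested: send the original text
  const delivery = await sms.send(mobile, text);
  return { id: joke.id, joke: text, delivery };
}

// Stand-ins for the real Jokes, translation and SMS clients (hypothetical shapes).
const fakeServices = {
  jokes: { getRandom: async () => ({ id: '1', joke: 'Knock knock.' }) },
  translator: { translate: async (text, lang) => `[${lang}] ${text}` },
  sms: { send: async (to, body) => ({ to, body, status: 'queued' }) },
};

shareJoke(fakeServices, { mobile: '+61400000000', language: 'es' })
  .then((result) => console.log(result));
```

Keeping the external clients behind small interfaces like these means each one can later be swapped for a real REST call without touching the orchestration itself.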

This sounds like quite a simple implementation, but in real projects it can be more challenging. We might have to include applications like banking systems, SAP, EBS, JDE, PeopleSoft, Workday, Salesforce, databases, files, etc.

You can save a lot of time, money and “hair”, if you look around for tools that give you out of the box adapters for the applications that you need to integrate with. Trust me, I’ve been doing integration for over 15 years and it is never simple to do real integrations from scratch, just relying on your skills as a programmer. It almost never ends well.

In my career, I have played with many vendor tools to integrate with applications and the ones that I use on a daily basis, are:

- NodeJS: If I need to create quick and easy prototypes or microservices – I tend to use NodeJS running on Docker containers in the cloud.

- Oracle Integration Cloud Service: If I need to integrate with enterprise grade applications, such as CRMs, ERPs, DBs, Files, etc across both on-premise and SaaS applications in the cloud – That’s a no brainer, I go with “Oracle Integration Cloud Service”. It can dramatically reduce the development time. See examples here: https://redthunder.blog/category/integration-cloud-service/

For the APIs for Jokes example, I decided to use NodeJS on Docker and I deployed it in Oracle Public Cloud using a PaaS service called Oracle Application Container Cloud Service. I find it a very simple way to embrace microservices running on Docker containers for multiple programming languages (polyglot), including NodeJS. For more information check this link.

If you want to embrace full automation as part of your API development, I also encourage you to look at Developer Cloud Service, as it gives you the ability to create and share private Git repositories, and provides Agile tools, Hudson for build, test and deployment automation, wikis, etc. To learn how to automate the deployment of the APIs for Jokes into Oracle Application Container Cloud Service, check this link.

You can download the whole APIs for Jokes project from GitHub here. It is not the best code, but it does the job.

Test Driven Development

Look, I am not a test enthusiast. I confess that the less testing I do, the better for me. However, although I very much dislike it, I understand its importance, especially if your APIs take a few iterations to develop across a team of developers. Trust me, APIs can be a nightmare if you do not establish the right level of discipline within the development team.

In terms of APIs, it gets a bit worse because, as you have probably observed by now, there is nothing really making sure that the “API Descriptor” that the API Designer(s) built during the “Design phase” is being fully honoured by the API Developers. Just think about it: the API descriptor is a lone file that is, most of the time, totally disconnected from the implementation of the APIs.

There is however some good news. The same tool that I recommended using during the design phase, “Apiary”, comes with a Testing tool called “Dredd”, that makes our life much easier.

If you want to get your hands dirty with Dredd, go to this blog that my colleague David Reid wrote a few days ago. Also, feel free to hit the online tutorials here.

For now, it is enough to say that “Dredd”, will make sure to report any deviation in the “API implementation” with respect to the “API Descriptor” (Swagger in this case) … Then on top of that, you can create your own hooks and set of assertions to ensure the API development is also bug-free. After each test run, Dredd will present a full report in Apiary under your API, with the ability to fully automate the whole Continuous Integration Testing.
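To give a feel for what such a deviation looks like, here is a small hand-rolled check. To be clear, this is not Dredd and it does not use Dredd’s API. It only illustrates the idea of comparing an actual response body with the object definition in the API descriptor. The `Joke` field names and the `findDeviations` helper are assumptions, and only simple types such as `string` or `number` are handled.

```javascript
// Object definition as it might appear in the API descriptor (field names assumed).
const jokeDefinition = {
  type: 'object',
  required: ['id', 'joke'],
  properties: { id: { type: 'string' }, joke: { type: 'string' } },
};

// Return a list of deviations between a response body and the definition.
function findDeviations(definition, body) {
  const problems = [];
  // Every required field must be present.
  for (const field of definition.required || []) {
    if (!(field in body)) problems.push(`missing required field "${field}"`);
  }
  // Every field that is present must be documented and have the declared type.
  for (const [field, value] of Object.entries(body)) {
    const spec = definition.properties[field];
    if (!spec) {
      problems.push(`undocumented field "${field}"`);
    } else if (typeof value !== spec.type) {
      problems.push(`field "${field}" should be ${spec.type}, got ${typeof value}`);
    }
  }
  return problems;
}

// An implementation that honours the descriptor produces no deviations.
console.log(findDeviations(jokeDefinition, { id: '7', joke: 'Why did the chicken...' })); // []

// One that drifted (numeric id, renamed text field) is reported.
console.log(findDeviations(jokeDefinition, { id: 7, text: 'Why did the chicken...' }));
```

A dedicated tool does far more than this, but the principle is the same: the descriptor is the contract and the running implementation is checked against it.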

Also, the beauty of Dredd is that, just as you can build your API implementation in any language (polyglot), you can also build your test cases in any language you want. Yes, you read that right: Dredd is programming language agnostic. By default it supports Go, NodeJS (JavaScript), Perl, PHP, Python, Ruby, Rust, etc. Or you can use your own favourite language. See more here.

Trust me, Dredd is going to bring sanity into your API Development. Make sure you look at it, before reinventing the wheel using other frameworks such as mocha, jasmine, chai, sinon, etc.

Secure and Deploy

Once your APIs are fully developed and you start thinking about making them available for others to consume, you need to ask yourself 2 questions:

- What are the security policies that need to be enforced to guarantee the right level of protection to each of my APIs?

- Where do I need to run these APIs? I.e. on physical infrastructure inside a corporate datacentre or in some Public Cloud (AWS, MS, Oracle, Google, etc.)?

API Manager(s) govern this phase. These are not necessarily the developer team any more. In fact, in my opinion, applying security and other policy enforcement, should not be a developer’s task.

Let’s be honest, developers are great at developing code, but most of the time security is not their cup of tea. I feel better taking off the developers’ shoulders the responsibility of thinking about the right levels of protection when applying security measures to code. Besides, this way we don’t have to open development cycles every time we need to modify a security policy, which would otherwise be costly and inefficient.

Ideally, we want to apply and enforce security policies after development has finished, once the APIs are fully developed and operational. This way, as the policies continue to evolve in the future, we gain flexibility and agility, and save a lot of money along the way.

In the current world, this task is normally controlled by the API Gateways, which are either hardware or software appliances that were born to protect APIs from malicious access or attacks. They have in their DNA, lots of policies that with a click of a button can be enforced, without having to modify the underlying API implementation.
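The idea of enforcing policies around an API without modifying its implementation can be sketched in a few lines. This is a toy model, not how the Oracle API Gateway or any other real gateway works: the `x-api-key` header name, the policy functions and the `applyPolicies` helper are all assumptions made for illustration.

```javascript
// The API implementation: it knows nothing about security.
function jokesHandler(request) {
  return { status: 200, body: { id: '1', joke: 'Knock knock.' } };
}

// Policy: reject calls that do not carry a known API key.
function requireApiKey(validKeys) {
  return (next) => (request) =>
    validKeys.has(request.headers['x-api-key'])
      ? next(request)
      : { status: 401, body: { error: 'invalid API key' } };
}

// Policy: allow at most `limit` calls per key (no time window, for brevity).
function rateLimit(limit) {
  const counts = new Map();
  return (next) => (request) => {
    const key = request.headers['x-api-key'];
    const used = (counts.get(key) || 0) + 1;
    counts.set(key, used);
    return used > limit
      ? { status: 429, body: { error: 'rate limit exceeded' } }
      : next(request);
  };
}

// The "gateway" wraps the handler; the first policy listed runs first.
function applyPolicies(handler, policies) {
  return policies.reduceRight((next, policy) => policy(next), handler);
}

const gateway = applyPolicies(jokesHandler, [
  requireApiKey(new Set(['demo-key'])),
  rateLimit(2),
]);

const call = { headers: { 'x-api-key': 'demo-key' } };
console.log(gateway({ headers: {} }).status); // 401
console.log(gateway(call).status, gateway(call).status, gateway(call).status); // 200 200 429
```

Changing the policy list changes how the API is protected, while `jokesHandler` stays exactly as the developers wrote it.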

There are lots of different API Gateways out there, both open source and commercial. In my day-to-day job, I like using the Oracle API Gateway, which comes as part of the Oracle API Platform. The reason I prefer it is that, while it is fully managed from a cloud-based console, the actual API Gateway can be installed anywhere, either on physical compute inside a corporate datacentre or on any public cloud (AWS, MS Azure, Oracle, Google, etc.).

This greatly simplifies the whole API Management process. It means that, from a central Web console, the APIs can be pushed out and deployed to any API Gateway instance connected to my account, running anywhere in the world. For example, I could have some applications running on Amazon cloud, others in MS Azure and some others on-premise, inside my own corporate datacentre. Then, as an API Manager, I can simply decide to push my APIs to be deployed and run in all these different locations. Once they are pushed out and deployed locally on each API Gateway, I can gather analytics from all these different points in my API Management Console.

This architecture is truly fascinating!

For more information about applying policies and deploying your APIs into API Gateways, please refer to this other blog that I wrote some time ago.

Consume and Analyse

This is the last step of our API journey. It is driven by the API Consumer(s) and heavily scrutinised by the API Manager(s). It is one of the most important stages. Here, we quickly assess whether all the effort in constructing our APIs has paid off.

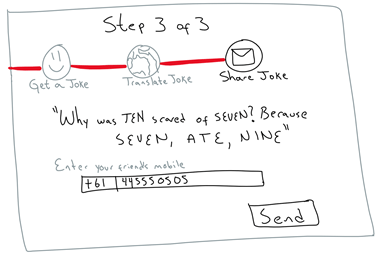

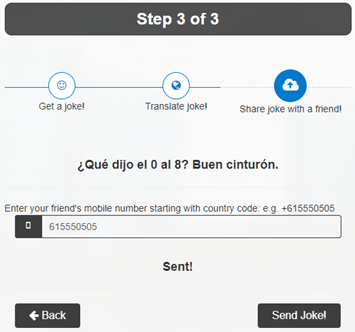

Also in this phase, you want to complete your original consumable application (as per your original storyboard) and give it a quick road test. The idea is to finally fulfil your original storyboard purpose. In our case, for Jokes APIs, I decided to create a quick HTML5 application that consumes the Jokes APIs in a way close to my original storyboard.

From my original storyboard:

To my final consumable application:

This stage commonly attracts a lot of eyes and scrutiny. Maybe you will find that a great idea is not that great after all, or that something unexpected turns out to be a great hit. You don’t know until you let your APIs be consumed by the developer community and end users. Only then can you assess how effective they are. The key is to streamline your whole API development, so that you can road test your ideas as quickly as possible, fail fast, adjust, and try again.

In a real API project, this stage is closely followed by the business stakeholders, who need to know the effectiveness of a marketing campaign or the viability of a new product. However, this stage does not only help to assess effectiveness and adjust direction. Remember that in the current world, APIs help generate new revenue streams for companies, so this stage is also useful for well-established products: businesses can obtain full historical visibility of their customers’ consumption, perhaps to invoice them with some service fees.

So far, just a few hours after deployment, I can see via the Oracle API Platform Analytics tools that the APIs for Jokes have been used 66 times, with most of the usage falling between 2pm and 9pm. Not bad for a silly idea, huh? Let’s check again tomorrow when the other continents wake up.

If you want to play with these Jokes APIs or want more information about APIs in general, go to: http://apismadeeasy.cloud

I hope that you found some structure in this blog that helps you construct your own APIs. If you need any piece of advice around your own API strategies, feel free to contact me directly via LinkedIn at https://www.linkedin.com/in/citurria/

Thanks for your time.