Sadly, the log file identifier icsdiagnosticlog was deprecated in late November 2020. icsflowlog and icsauditlog are still available, so you should be able to apply the same pattern used in this blog to manage your OIC instance log files.

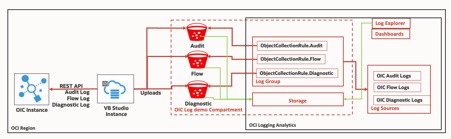

I would like to show how OIC log management can be achieved with OCI Object Storage (I’ll call it the bucket), OCI Logging Analytics and Visual Builder Studio (formerly Developer Cloud; I’ll call it VB Studio).

Interestingly, I’m not going to use OIC to download the log files, nor to ingest log data from OCI Object Storage. VB Studio will be my tool for sourcing the log files and feeding them to the bucket: I’ll take advantage of the unix shell and oci-cli available in VB Studio. OCI Logging Analytics will then ingest the log data from the bucket based on cloud events.

Here is the simple architecture of what I’m going to do. In this architecture, the OIC instance and the VB Studio instance can be located in a different OCI region from the one where the bucket and OCI Logging Analytics live, because everything goes through the REST API and OCI-CLI.

Firstly, we know OIC provides a REST API to download log files in compressed format. Anyone who knows OIC would say “sure, let’s create an integration to download the log files using the given REST API and unzip them if required, then create another one to push the zipped or unzipped files onto a file server, FTP server or bucket”. Well, in this blog I will use the curl command to download the log files instead. Why? Because it only takes a couple of commands to achieve it.

- curl -X GET -u 'me:mypassword' -o 'icsdiagnosticlog.zip' https://target-url.com/ic/api/integration/v1/monitoring/logs/icsdiagnosticlog

- unzip icsdiagnosticlog.zip -d logdir
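The two commands above can be wrapped in a small helper so that a failed download stops the job instead of trying to unzip an error page. This is only a sketch under my own assumptions: the function names `oic_log_url` and `fetch_oic_log` are made up for illustration, and `OICURL`, `OICUSER` and `OICPASSWORD` are assumed to be set by the caller. It uses icsauditlog as the example identifier because it is still available.

```shell
#!/bin/sh
# Sketch only: OICURL, OICUSER and OICPASSWORD are assumed to be set by the caller.

# Build the log download URL for a given log identifier (e.g. icsauditlog).
oic_log_url() {
  printf '%s/ic/api/integration/v1/monitoring/logs/%s\n' "$OICURL" "$1"
}

# Download one log archive and unpack it into a target directory.
# Usage: fetch_oic_log icsauditlog logdir
fetch_oic_log() {
  log_id=$1
  out_dir=$2
  mkdir -p "$out_dir" || return 1
  # --fail: return non-zero on HTTP 4xx/5xx instead of saving the error body
  curl --fail --silent --show-error -u "$OICUSER:$OICPASSWORD" \
       -o "$out_dir/$log_id.zip" "$(oic_log_url "$log_id")" || return 1
  # unpack, then remove the archive only if unzip succeeded
  unzip -o -d "$out_dir" "$out_dir/$log_id.zip" && rm "$out_dir/$log_id.zip"
}
```

Calling `fetch_oic_log icsauditlog logdir` would then leave the unzipped audit log under `logdir`, or return a non-zero status that fails the VB Studio build step.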

It is doable with OIC, but I decided to go a slightly different way because I know VB Studio provides unix shell capability with oci-cli in its job configuration.

So, I’m ready to deliver the log files anywhere from VB Studio.

Again, in this blog I chose a bucket rather than an FTP or file server because it comes with all the features I need:

- Secure place to save log with your own key (https://docs.cloud.oracle.com/en-us/iaas/Content/Object/Tasks/usingyourencryptionkeys.htm)

- Archive it after some time to save cost (https://docs.cloud.oracle.com/en-us/iaas/Content/Object/Tasks/usinglifecyclepolicies.htm)

- Ingest log data from bucket for OCI Logging Analytics (https://docs.cloud.oracle.com/en-us/iaas/logging-analytics/doc/collect-logs-oci-object-storage-bucket.html)

Here is the oci-cli command to upload a file to the bucket from the unix shell:

oci os object put -ns $NAMESPACE -bn $BUCKETNAME --name $FILENAME --file $PATH_TO_FILE

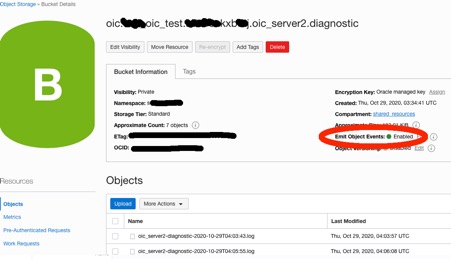

When creating buckets in your OCI compartment, make sure you follow a bucket naming standard. I build the bucket name from the namespace, the OIC instance name and so on, so that it can be derived dynamically from the unix shell script. For example, I use the following bucket naming standard:

oic.oic-instance-name.namespace.oic_server?.[audit|flow|diagnostic] #eg. oic.jpa-test.djskdjs78798.oic_server1.flow
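To show how such a name could be assembled in the job, here is a minimal sketch. `bucket_name` is a hypothetical helper of mine, not part of oci-cli, and the argument order is an assumption; it simply joins the parts with dots and rejects unknown log types.

```shell
# Sketch only: bucket_name is a hypothetical helper, not part of oci-cli.
# Usage: bucket_name <oic-instance-name> <namespace> <node> <audit|flow|diagnostic>
bucket_name() {
  case $4 in
    audit|flow|diagnostic) ;;
    *) echo "unknown log type: $4" >&2; return 1 ;;
  esac
  printf 'oic.%s.%s.%s.%s\n' "$1" "$2" "$3" "$4"
}

bucket_name jpa-test djskdjs78798 oic_server1 flow
# prints: oic.jpa-test.djskdjs78798.oic_server1.flow
```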

The log file name needs to be in a format OCI Logging Analytics prefers, so I keep it simple:

filename-%Y-%m-%dT%H:%M:%S.log #eg. ics-audit-2020-11-02T11:11:11.log
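A small sketch of how that file name could be produced in the shell follows. `stamped_name` is a hypothetical helper name; the optional second argument is my addition so that the same timestamp can be reused for several files in one run.

```shell
# Sketch only: stamped_name is a hypothetical helper.
# Usage: stamped_name <base-name> [timestamp]
# When no timestamp is given, the current date and time are used.
stamped_name() {
  ts=${2:-}
  [ -n "$ts" ] || ts=$(date +'%Y-%m-%dT%H:%M:%S')
  printf '%s-%s.log\n' "$1" "$ts"
}

stamped_name ics-audit 2020-11-02T11:11:11
# prints: ics-audit-2020-11-02T11:11:11.log
```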

Also make sure the bucket has been enabled to emit object events.

Half of the work is now done from VB Studio.

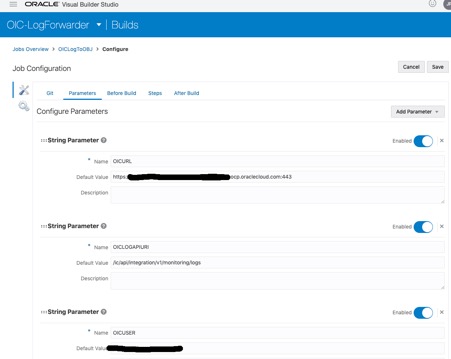

Here is the unix shell script with oci-cli that runs from VB Studio. Variables are either defined as parameters of the job or derived dynamically:

    #get ns
    export NAMESPACE=$(oci os ns get | jq '.data' | sed 's/^"\(.*\)".*/\1/')
    #get cur date time
    export TS="$(date +'%Y-%m-%dT%H:%M:%S')"
    mkdir -p $WORKSPACE/log
    curl -G -X GET -u "$OICUSER:$OICPASSWORD" -o $WORKSPACE/log/icsdiagnosticlog.zip --data-urlencode "$QSTR" $OICURL/$OICLOGAPIURI/icsdiagnosticlog
    curl -G -X GET -u "$OICUSER:$OICPASSWORD" -o $WORKSPACE/log/icsauditlog.zip --data-urlencode "$QSTR" $OICURL/$OICLOGAPIURI/icsauditlog
    unzip -d $WORKSPACE/log $WORKSPACE/log/icsdiagnosticlog.zip
    export PODNAME=$(cat $WORKSPACE/log/environment.txt | grep "POD Name" | sed -n 's/.*: //p')
    unzip -o -d $WORKSPACE/log $WORKSPACE/log/icsauditlog.zip
    rm $WORKSPACE/log/*.zip
    cd $WORKSPACE/log
    export BN_PREFIX=oic.$PODNAME.$NAMESPACE

    #when 1st node eg. oic_server1
    export NODE=$(ls -d *_server1)
    #when ics-audit.log exists
    export LOG_FN=ics-audit
    [ -s $NODE/$LOG_FN.log ] && { mv $NODE/$LOG_FN.log $NODE/$LOG_FN-$TS.log; oci os object put -ns $NAMESPACE -bn $BN_PREFIX.$NODE.audit --name $LOG_FN-$TS.log --file $NODE/$LOG_FN-$TS.log; }
    #when ics-flow.log exists
    export LOG_FN=ics-flow
    [ -s $NODE/$LOG_FN.log ] && { mv $NODE/$LOG_FN.log $NODE/$LOG_FN-$TS.log; oci os object put -ns $NAMESPACE -bn $BN_PREFIX.$NODE.flow --name $LOG_FN-$TS.log --file $NODE/$LOG_FN-$TS.log; }
    #when x-diagnostic.log exists
    export LOG_FN=$NODE-diagnostic
    [ -s $NODE/$LOG_FN.log ] && { mv $NODE/$LOG_FN.log $NODE/$LOG_FN-$TS.log; oci os object put -ns $NAMESPACE -bn $BN_PREFIX.$NODE.diagnostic --name $LOG_FN-$TS.log --file $NODE/$LOG_FN-$TS.log; }

    #when 2nd node eg. oic_server2
    export NODE=$(ls -d *_server2)
    #when ics-audit.log exists
    export LOG_FN=ics-audit
    [ -s $NODE/$LOG_FN.log ] && { mv $NODE/$LOG_FN.log $NODE/$LOG_FN-$TS.log; oci os object put -ns $NAMESPACE -bn $BN_PREFIX.$NODE.audit --name $LOG_FN-$TS.log --file $NODE/$LOG_FN-$TS.log; }
    #when ics-flow.log exists
    export LOG_FN=ics-flow
    [ -s $NODE/$LOG_FN.log ] && { mv $NODE/$LOG_FN.log $NODE/$LOG_FN-$TS.log; oci os object put -ns $NAMESPACE -bn $BN_PREFIX.$NODE.flow --name $LOG_FN-$TS.log --file $NODE/$LOG_FN-$TS.log; }
    #when x-diagnostic.log exists
    export LOG_FN=$NODE-diagnostic
    [ -s $NODE/$LOG_FN.log ] && { mv $NODE/$LOG_FN.log $NODE/$LOG_FN-$TS.log; oci os object put -ns $NAMESPACE -bn $BN_PREFIX.$NODE.diagnostic --name $LOG_FN-$TS.log --file $NODE/$LOG_FN-$TS.log; }

    echo done
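The per-node, per-log-type steps in the script repeat the same three actions (check, rename, upload), so they could also be written as a loop. The following is a sketch of that idea, not the script I actually ran: `upload_node_logs`, `upload_all_nodes` and `UPLOAD_CMD` are hypothetical names, and `NAMESPACE`, `BN_PREFIX` and `TS` are assumed to be set as in the script above. `UPLOAD_CMD` defaults to `oci` and can be set to `echo` for a dry run.

```shell
# Sketch only: hypothetical helpers; NAMESPACE, BN_PREFIX and TS are assumed to be
# set as in the job script. Set UPLOAD_CMD=echo to print the uploads instead of running them.
UPLOAD_CMD=${UPLOAD_CMD:-oci}

# Rename and upload the audit, flow and diagnostic logs found under one node directory.
upload_node_logs() {
  node=$1
  for kind in audit flow diagnostic; do
    case $kind in
      diagnostic) log_fn=$node-diagnostic ;;  # e.g. oic_server1-diagnostic.log
      *)          log_fn=ics-$kind ;;         # ics-audit.log, ics-flow.log
    esac
    # skip log types that are missing or empty for this node
    [ -s "$node/$log_fn.log" ] || continue
    mv "$node/$log_fn.log" "$node/$log_fn-$TS.log"
    $UPLOAD_CMD os object put -ns "$NAMESPACE" -bn "$BN_PREFIX.$node.$kind" \
      --name "$log_fn-$TS.log" --file "$node/$log_fn-$TS.log"
  done
}

# Process every node directory (oic_server1, oic_server2, ...) in the current directory.
upload_all_nodes() {
  for node_dir in *_server[0-9]*; do
    [ -d "$node_dir" ] && upload_node_logs "$node_dir"
  done
  return 0
}
```

With this shape, a third managed server would be picked up without touching the script, as long as a matching bucket exists for it.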

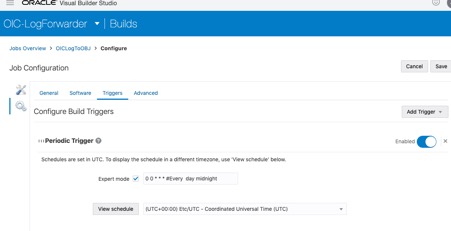

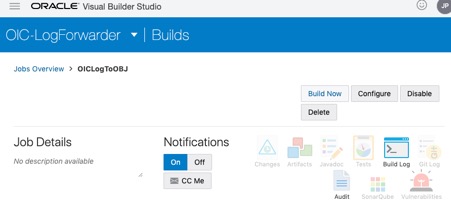

I forgot to mention that running the job on a schedule from VB Studio is straightforward, as below. Just set a trigger using the typical crontab schedule format.

Now it’s time for the other half: enabling log data collection from the bucket.

You must go through the prerequisite configuration tasks documentation (https://docs.cloud.oracle.com/en-us/iaas/logging-analytics/doc/prerequisite-configuration-tasks.html) for OCI Logging Analytics and the steps to enable log data collection from a bucket (https://docs.cloud.oracle.com/en-us/iaas/logging-analytics/doc/collect-logs-oci-object-storage-bucket.html), which include setting up a Log Group, Log Source and Object Collection Rule. A log entity is not required for this use case.

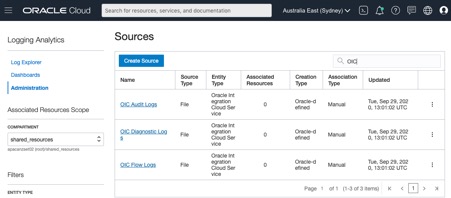

OCI Logging Analytics comes with predefined Log Sources as below, and they worked fine for the OIC logs I uploaded to the bucket:

To enable log data collection from a bucket by OCI Logging Analytics, an Object Collection Rule has to be created for each bucket; it’s a one-off task done with OCI-CLI as below. I used the example JSON payload below to create the data file for the rule with “OIC Audit Logs”. As you can see, I’ve extended the bucket naming standard to the Object Collection Rule.

After creating all the rules, make sure they are active using an OCI-CLI command such as “oci log-analytics object-collection-rule list”.
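As a rough idea of what generating such a data file per bucket might look like, here is a sketch. It is not my exact payload: `rule_payload` is a hypothetical helper, and the JSON keys are my assumption of the camelCase form of the options accepted by `oci log-analytics object-collection-rule create`, so please check them against the CLI reference before use. The OCIDs in the usage comment are placeholders.

```shell
# Sketch only: rule_payload is a hypothetical helper, and the JSON keys are assumed to
# mirror the options of "oci log-analytics object-collection-rule create".
# Arguments must not contain double quotes or backslashes, since no JSON escaping is done.
# Usage: rule_payload <rule-name> <bucket-name> <log-source-name> <namespace> \
#                     <compartment-ocid> <log-group-ocid>
rule_payload() {
  cat <<EOF
{
  "name": "$1",
  "compartmentId": "$5",
  "osNamespace": "$4",
  "osBucketName": "$2",
  "logGroupId": "$6",
  "logSourceName": "$3",
  "namespaceName": "$4"
}
EOF
}

# Example (placeholder OCIDs), writing the data file and creating the rule:
#   rule_payload my-audit-rule oic.jpa-test.djskdjs78798.oic_server1.audit \
#     "OIC Audit Logs" djskdjs78798 "$COMPARTMENT_OCID" "$LOG_GROUP_OCID" > rule.json
#   oci log-analytics object-collection-rule create --from-json file://rule.json
```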

Everything is ready, so let’s kick off the VB Studio job by clicking “Build Now”.

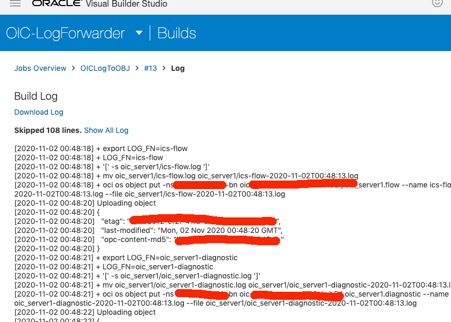

The build is done, so we can check the “Build Log”. Remember that this can also be run by a scheduled trigger.

The log files have been downloaded, unzipped and uploaded to the relevant buckets with a timestamp in their file names. Based on the Object Collection Rules, all the log data is now available to OCI Logging Analytics.

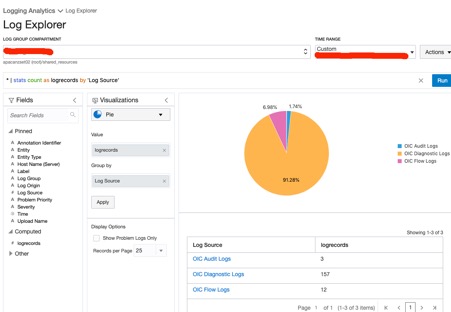

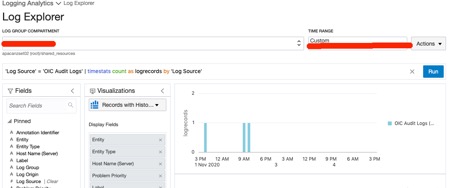

Let’s go to OCI Logging Analytics from the OCI console, then choose Log Explorer with the right log group compartment and the right time range.

Hooray! We’ve got OIC logs from three different types of sources (audit, flow and diagnostic) available in Log Explorer.

Clicking the “OIC Audit Logs” segment of the pie chart presents the audit records.

Thanks for reading this blog.

To do: play with dashboards in OCI Logging Analytics. I’ll leave that to you to have fun :)

Awesome Blog Jin. Keep doing great work !!!


Awesome article, but I’ve just discovered that the icsdiagnosticlog API endpoint is no longer documented and has been deprecated by Oracle. Also, from the console it is no longer possible to download the diagnostic log starting from the November release of OIC. Support confirmed that the diagnostic log is no longer necessary in favour of new and improved tracing and tracking features. But I totally disagree.. 😦


Excellent comment, Andrea, and happy new year 2021. The blog has been updated.


Thank you for your blog. Quite informative. I have a different view: every integration tool should allow a troubleshooting mechanism. Unfortunately the icslog and the activity stream have a limit of 10 MB, which leads to purged data, and this is a pain. In addition, I find this approach unnecessarily complicated, as the logs are already collected in OIC. Just increase the allowed size, or offer better log management from OIC and charge based on storage, as you are doing for DBaaS. Customers want to reduce the number of tools, platforms, connections and maintenance.
