Try though we might to shift everything to a glorious set of stateless services, there are still plenty of scenarios in which an application needs to store some state, commonly to track information about a user and that user’s session. In these cases an in-memory cache is a powerful addition to an application. It is nearly indispensable for an elastic, cloud-architected app that is expected to scale horizontally on demand, because it allows information to be shared between application instances and avoids the load balancer complexity of implementing sticky sessions. Application Container Cloud Service (ACCS) added the ability for applications to add a Caching Service binding in the 17.1.1 drop, and in this blog post we will explore how to leverage this capability in a Node.js application.

In a previous blog post, I wrote about extending OAuth services to provide out-of-band authorisation. As part of that flow, authorisation requests are made and identified by a request id, and each request is resolved at a later time. To facilitate this, the requests need to be held temporarily until resolved, and given that they time out after a handful of minutes, writing them to persistent storage is probably overkill. This seemed like a perfect scenario in which to utilise the ACCS Caching Service, sharing views of data across multiple application instances, and this post captures the work that was done around that. Though this scenario is relatively straightforward, the Caching Service can also accommodate much more complicated scenarios in which multiple different applications require views of the same data across multiple named caches.
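To make that request lifecycle concrete, here is a minimal sketch of holding pending authorisation requests until they are resolved or time out. It is an illustration under stated assumptions, not the code from the OAuth post: the `PendingRequests` class, its `create`/`lookup`/`resolve` methods and the five-minute default TTL are all hypothetical, and a plain in-memory `Map` stands in for the shared cache. In a multi-instance deployment the `Map` would be replaced by reads and writes against a named cache, so that whichever instance receives the resolution can find the request.

```javascript
const crypto = require("crypto");

// Hypothetical store for out-of-band authorisation requests.
// A Map stands in for the shared cache; each entry carries its own expiry time.
class PendingRequests {
  constructor(ttlMs = 5 * 60 * 1000, now = () => Date.now()) {
    this.ttlMs = ttlMs; // assumed timeout: "a handful of minutes"
    this.now = now;     // injectable clock, useful for testing
    this.store = new Map();
  }

  // Record a new request and return the id used to resolve it later.
  create(details) {
    const id = crypto.randomUUID();
    this.store.set(id, { details, status: "pending", expiresAt: this.now() + this.ttlMs });
    return id;
  }

  // Return the entry, or undefined if it never existed or has timed out.
  lookup(id) {
    const entry = this.store.get(id);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.store.delete(id); // lazily evict expired requests
      return undefined;
    }
    return entry;
  }

  // Mark a live request as approved or denied; returns false if it has expired.
  // With a real cache, the updated entry would have to be put back explicitly.
  resolve(id, approved) {
    const entry = this.lookup(id);
    if (!entry) return false;
    entry.status = approved ? "approved" : "denied";
    return true;
  }
}
```

The injectable clock keeps expiry testable without waiting; lazy eviction on lookup is one simple choice, and a cache with its own expiry support would make it unnecessary.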

Setting up a Caching Service Instance

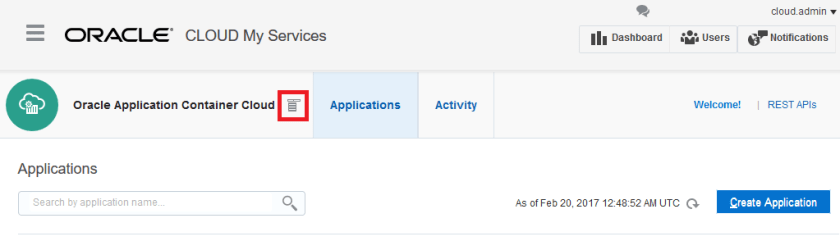

The first step is to create our Caching Service instance. The Caching Platform Service doesn’t have a link on the Service Dashboard yet, so getting to the Caching Service management page is a little roundabout. From the Application Container Cloud Service console, click the ‘select service type’ button, which is very clearly labelled…

From there, the link to the Caching service is available.

Update: It seems that in the 17.1.5 update, the Caching Service was moved from the Platform Services heading to the Application Container Cloud heading, where it is now known as ‘Application Cache’.

When you create a service, you have a few standard options, such as Name and Description, as well as two options that affect the topology of your Caching Service: Deployment Type and Cache Capacity. The ‘Basic’ deployment type is designed for development and creates a single node in a single container. This is fine for testing, but obviously lacks resilience. The ‘Recommended’ deployment type creates a cluster of at least three caching instances, and any data written to the Caching Service is replicated to at least two nodes. You can also tweak the Cache Capacity, which defines the maximum size of the Caching Service. These can be scaled later, as required by load or storage requirements.

At the time of writing, the maximum Cache Capacity is 20GB for a Caching Service instance of Recommended type, and 5GB for a Caching Service in Basic topology, which should be plenty for most micro-service oriented developments.

This is probably a good time to point out the difference between a Caching Service instance and a Cache, as I keep catching myself writing cache when I mean to refer to a service instance. A Caching Service instance represents a cluster of caching nodes, with capacity to store data in multiple named caches. When you perform a caching operation, you specify the cache on which it is operating. Caches are created dynamically, so if you put to a cache which doesn’t exist yet, it will be created. A Caching Service instance can have any number of named caches for different purposes. For example, a retail application might have a Customers cache, an Inventory cache and a Session cache, all within the same Caching Service instance, and different applications could access the Inventory cache if they all bound to the same Caching Service instance and specified the Inventory cache by name.
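The instance-versus-cache distinction can be modelled in a few lines. This is an illustrative in-memory model only, not how the service is implemented: the `CachingServiceInstance` class and its methods are hypothetical. It shows the two behaviours described above: a put to an unknown cache name creates that cache, and every client holding the same instance and cache name sees the same data.

```javascript
// Illustrative model: one service instance holds many named caches.
class CachingServiceInstance {
  constructor() {
    this.caches = new Map(); // cacheName -> Map(key -> value)
  }

  // As with the real service, putting to a cache that doesn't exist yet creates it.
  put(cacheName, key, value) {
    if (!this.caches.has(cacheName)) {
      this.caches.set(cacheName, new Map());
    }
    this.caches.get(cacheName).set(key, value);
  }

  // Reading from a cache that was never created simply finds nothing.
  get(cacheName, key) {
    const cache = this.caches.get(cacheName);
    return cache ? cache.get(key) : undefined;
  }

  // Names of the caches created so far, in creation order.
  cacheNames() {
    return [...this.caches.keys()];
  }
}
```

In the retail example, the storefront and a warehouse application bound to the same instance would both call `put`/`get` with the cache name `"Inventory"` and therefore operate on the same entries.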

Developing your Application with Caching

Applications running in ACCS communicate with the caching service by invoking the Caching Service REST API, which is documented here.

If you are using Node.js, you can take advantage of the time I spent trying to understand how to use the Caching API in my own applications, as I built an npm module which abstracts away all of the complexity of working with Caches in ACCS. The module is ‘accs-cache-handler’ and is available here.

Invoking the Caching Service through the module is super simple: it exposes the standard caching methods get, put and delete, and all object serialisation and deserialisation is handled for you. For example:

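```javascript
var Cache = require('accs-cache-handler');

// Work with the named cache "MyFirstACCSCache" in the bound Caching Service instance
var objCache = new Cache("MyFirstACCSCache");

//Do Stuff

var customerID = "12345"; // placeholder: normally obtained earlier in your application
var key = customerID;

var objToStore = {
  name: "John Smith",
  age: 45,
  isCustomer: true
};

//Put an object
objCache.put(key, objToStore, function (err) {
  if (err) {
    console.log(err);
    return; // don't attempt the read if the write failed
  }

  //Retrieve the object
  objCache.get(key, function (err, result) {
    if (err) {
      console.log(err);
      return; // result is not usable on error
    }
    console.log(result.name); //"John Smith"
  });
});
```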

Other applications can also access this data if they instantiate a Cache object with the name “MyFirstACCSCache”, as this is the underlying named Cache in the Caching Service Instance. There is a bit more functionality to the module, but ultimately it is a cache so get, put, and delete get you a long way.

The module also supports testing code that uses the ACCS Caching Service without having to deploy it to ACCS. This was one of my pet peeves: deployment takes a little bit of time, and the delay when testing tiny code changes was irksome. The module handles this by falling back on a local object store, with all of the same interfaces and behaviour, if it doesn’t look like the code is running in ACCS (i.e. the environment variable set by the Caching Service binding is absent).
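The fallback idea can be sketched as follows. This is not the module’s actual source: `createCache`, `LocalCache` and the `remoteFactory` parameter are hypothetical names. The sketch only shows selection keyed off whether `CACHING_INTERNAL_CACHE_URL` is present, plus a local store exposing the same callback-style `get`/`put`/`delete` calls used in the example above; the remote implementation is left to a caller-supplied factory.

```javascript
// Hypothetical sketch of an environment-based fallback; not the accs-cache-handler source.
class LocalCache {
  constructor(name) {
    this.name = name;
    this.store = new Map();
  }

  put(key, value, callback) {
    // Serialise on write so callers get copies back, as they would from a remote cache.
    this.store.set(key, JSON.stringify(value));
    setImmediate(() => callback(null)); // stay asynchronous, like a network call
  }

  get(key, callback) {
    const raw = this.store.get(key);
    setImmediate(() => callback(null, raw === undefined ? undefined : JSON.parse(raw)));
  }

  delete(key, callback) {
    this.store.delete(key);
    setImmediate(() => callback(null));
  }
}

// Use the remote implementation only when the Caching Service binding is present.
function createCache(name, remoteFactory, env = process.env) {
  if (env.CACHING_INTERNAL_CACHE_URL) {
    return remoteFactory(name, env.CACHING_INTERNAL_CACHE_URL);
  }
  return new LocalCache(name);
}
```

Serialising on write and deferring callbacks with `setImmediate` keep the local store honest: code that accidentally relies on shared object references or synchronous callbacks fails locally instead of only after deployment.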

If you are using Java or PHP, you will likely have to get pretty familiar with the above API reference, as well as understand how to access the caching service host, so read on. When an application is set up with a binding to a Caching Service, an environment variable is set which provides the hostname of the caching service, to be used as part of the base URI for the Caching Service REST API. This environment variable is CACHING_INTERNAL_CACHE_URL, and the base URI is assembled as follows:

`http://<CACHING_INTERNAL_CACHE_URL>:8080/ccs/<path as per docs>`

The {cacheName} path variable in the documents is your Named Cache, and the cache is created dynamically on the first PUT or POST. Other applications wishing to access the objects created in that named cache can use the same URL to obtain the stored objects.
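As a sketch of assembling those URIs (shown in JavaScript for consistency with the rest of this post; the same pattern carries over to Java or PHP), consider the helpers below. `cacheUrl`, `putObject` and `getObject` are hypothetical; the `/{cacheName}/{key}` path shape, the `application/octet-stream` content type, JSON as the storage format and a 404 for a missing key are all assumptions to check against the API reference. The request helpers use the global `fetch` available in Node.js 18 and later.

```javascript
// Hypothetical helpers for the Caching Service REST API.
// Assumes the /ccs/{cacheName}/{key} path shape; verify against the API reference.
function cacheUrl(cacheName, key, env = process.env) {
  const host = env.CACHING_INTERNAL_CACHE_URL;
  if (!host) {
    throw new Error("CACHING_INTERNAL_CACHE_URL is not set; is the Caching Service bound?");
  }
  // Encode each segment so keys containing '/' or spaces remain a single path segment.
  return `http://${host}:8080/ccs/${encodeURIComponent(cacheName)}/${encodeURIComponent(key)}`;
}

// Store an object as a JSON string (serialisation format and content type are assumptions).
async function putObject(cacheName, key, obj) {
  const res = await fetch(cacheUrl(cacheName, key), {
    method: "PUT",
    headers: { "Content-Type": "application/octet-stream" },
    body: JSON.stringify(obj),
  });
  if (!res.ok) throw new Error(`PUT failed with status ${res.status}`);
}

// Fetch and deserialise an object; assumes a missing key yields 404.
async function getObject(cacheName, key) {
  const res = await fetch(cacheUrl(cacheName, key));
  if (res.status === 404) return undefined;
  if (!res.ok) throw new Error(`GET failed with status ${res.status}`);
  return JSON.parse(await res.text());
}
```

Failing fast in `cacheUrl` when the variable is missing turns a forgotten binding into a clear error rather than a request to `http://undefined:8080`.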

Unfortunately the Caching API is not accessible externally, which makes it difficult to test your code locally. As a workaround, I wrote a little Node.js application that provides pass-through access to the ACCS Caching API, so you can test locally against the services it exposes simply by swapping the base URL your code uses when running locally. I found it was enough to get started understanding how the API handles data.

The pass-through application is available here, and is provided as-is. It hasn’t been extensively tested, and I can’t guarantee it will work with all payload types, but it should at least get you started.
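Swapping the base URL can be as simple as choosing it once at startup. In this sketch, `baseUri`, `itemUri` and the `CACHE_PASSTHROUGH_URL` variable are hypothetical, and the deployed pass-through application’s actual address and path layout may differ; the point is that only the base changes while the cache name and key portion of the path stays the same.

```javascript
// Choose the Caching API base URI: the internal ACCS endpoint when the binding
// is present, otherwise the deployed pass-through app (hypothetical variable).
function baseUri(env = process.env) {
  if (env.CACHING_INTERNAL_CACHE_URL) {
    return `http://${env.CACHING_INTERNAL_CACHE_URL}:8080/ccs`;
  }
  if (env.CACHE_PASSTHROUGH_URL) {
    // Strip trailing slashes so joining path segments stays consistent.
    return env.CACHE_PASSTHROUGH_URL.replace(/\/+$/, "");
  }
  throw new Error("No cache endpoint configured");
}

// Build the URI for one item; identical path shape for both endpoints.
function itemUri(cacheName, key, env = process.env) {
  return `${baseUri(env)}/${encodeURIComponent(cacheName)}/${encodeURIComponent(key)}`;
}
```

Keeping the choice in one function means the rest of the code never needs to know whether it is running inside ACCS or on a developer machine.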

Deploying your application

Unfortunately, as of writing, caching services cannot be bound to an application at deployment time when deploying through the ACCS UI. As such, after deploying your application, the binding must be added from the Deployments tab, in application management.

Update: The ACCS 17.1.5 update added the ability to bind to a cache at application creation time; you can now select an available cache from a drop-down on the application creation screen.

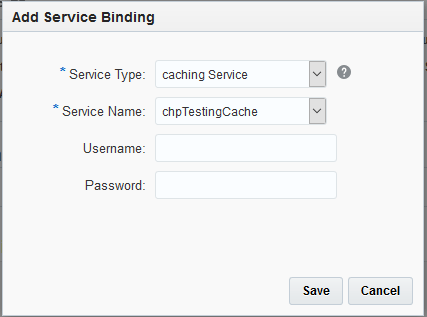

In the ‘Add Service’ popup, you can specify the type of service as ‘Caching’ which allows you to select a Caching Service Instance that was created earlier.

After applying the changes and restarting, the internal networking is set up to allow access to the cache via the hostname set in the CACHING_INTERNAL_CACHE_URL environment variable (which can be viewed by checking the ‘Show All Variables’ checkbox in that same screen).

Known Issue in ACCS version 17.1.3: In 17.1.3, the caching hostname sometimes fails to resolve if ‘isClustered’ is not set in manifest.json. To avoid this, ensure that your manifest.json looks similar to this (for Node.js):

```json
{
  "runtime": {
    "majorVersion": "6"
  },
  "command": "node app.js",
  "isClustered": "true"
}
```

At present, an application can only have one Caching Service binding, so it is important to consider how data ought to be segregated between Caching Service instances. For most use cases it shouldn’t matter, and in a micro-services architecture, applications which straddle these data segregation zones should probably be interacting via service interfaces rather than with the data layer directly.

Caching adds a powerful capability to maintain stateful information across multiple application instances, and to share it across multiple applications running on ACCS. This is a necessity for delivering elastic, scalable applications such as my authorisation services, where load will vary widely depending on time of day, the popularity of third-party offerings, and so on. I want to add capacity as required without worrying about load balancing, while ensuring that authorisation requests are accessible to whichever instance happens to be invoked at the time. Adding Caching Services to applications deployed on Application Container Cloud is relatively straightforward, and I have endeavoured to make it even easier for Node.js developers by developing a module that provides simpler interfaces and the ability to test ACCS Caches offline. If you are a Java or PHP developer, using ACCS Caching might require a little more consideration at design time, but the decision to offload state to a shared cache pays off many times over with a much more scalable application.
