We have published several blogs on how to use Terraform to automate the provisioning of environments and treat your infrastructure as code. Until now, for PaaS stacks, we have used Terraform together with the Oracle PaaS Service Manager (PSM) CLI. This gives us great flexibility to script our own tailored PaaS stacks the way we want them. However, with flexibility comes responsibility: if we choose to use the PSM CLI, it’s up to us to script the whole provisioning and decommissioning of the components that make up the stack, as well as what to do if we encounter an error halfway through, so that we leave things in a consistent state.
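To make that responsibility concrete, here is a minimal sketch of the bookkeeping we take on when we script a stack ourselves: provision components in order and, if one fails, decommission the ones already created in reverse order. It is not PSM CLI code. The component names and the `ok`/`fail` callables are hypothetical stand-ins for whatever commands a real script would run.

```python
from typing import Callable, List, Tuple

# Each step is (name, provision, decommission). All names are hypothetical.
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def provision_stack(steps: List[Step]) -> List[str]:
    """Provision components in order; on failure, undo completed ones in reverse."""
    done: List[Step] = []
    log: List[str] = []
    for step in steps:
        name, provision, _ = step
        try:
            provision()
        except Exception as exc:
            log.append(f"failed {name}: {exc}")
            # Roll back everything that was already provisioned, newest first.
            for prev_name, _, decommission in reversed(done):
                decommission()
                log.append(f"rolled back {prev_name}")
            return log
        done.append(step)
        log.append(f"provisioned {name}")
    return log


def ok() -> None:
    """Stand-in for a provisioning or decommissioning call that succeeds."""


def fail() -> None:
    """Stand-in for a provisioning call that fails halfway through the stack."""
    raise RuntimeError("quota exceeded")


if __name__ == "__main__":
    demo = [("storage", ok, ok), ("dbcs", ok, ok), ("ics", fail, ok)]
    for line in provision_stack(demo):
        print(line)
```

Even this toy version has to track what was created and in which order, which is exactly the kind of plumbing we would rather not maintain by hand.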

A simpler way to provision PaaS stacks is to use Oracle Cloud Stack, which treats all components of the stack as a single unit and provisions or decommissions all sub-components transparently for us. For example, an Oracle Integration Cloud (OIC) stack is made up of Oracle DB Cloud Service (DBCS), Integration Cloud Service (ICS), Process Cloud Service (PCS), Visual Builder Cloud Service (VBCS), IaaS, storage, network, etc. If we use Oracle Cloud Stack to provision an environment, we only have to pass a YAML template with the configuration of the whole stack, and Cloud Stack handles the rest. Pretty awesome, huh?

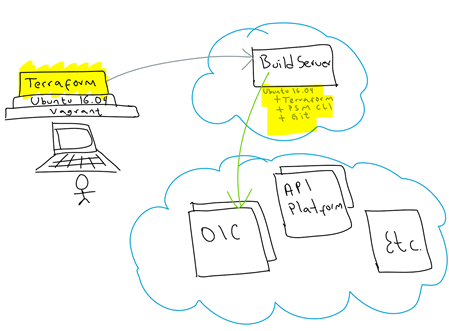

As we have done in the past, we are going to use a “Build Server” as a platform to help us provision our PaaS stacks. When provisioning this “Build Server”, I will add all the tooling it requires as part of its bootstrap process. For this, I am using Vagrant + Terraform, so that I can also treat my “Build Server” as “infrastructure as code” and easily get rid of it after I have built my target PaaS stack.

This is a graphical view of what I will be doing in this blog to provision an OIC stack via Cloud Stack:
