In November 2018, I had the privilege to attend the Australian Oracle User Group national conference “#AUSOUG Connect” in Melbourne. My role was to have video interviews with as many of the speakers and exhibitors at the conference. Overall, 10 interviews over the course of the day, 90 mins of real footage, 34 short clips to share and plenty of hours reviewing and post-editing to capture the best parts.

In November 2018, I had the privilege to attend the Australian Oracle User Group national conference “#AUSOUG Connect” in Melbourne. My role was to have video interviews with as many of the speakers and exhibitors at the conference. Overall, 10 interviews over the course of the day, 90 mins of real footage, 34 short clips to share and plenty of hours reviewing and post-editing to capture the best parts.

Category: DevOps

Teaching How to Get Started with Oracle Container Engine for Kubernetes (OKE)

In a previous blog, I explained how to provision a Kubernetes cluster locally on your laptop (either as a single node with minikube or a multi-node using VirtualBox), as well as remotely in the Oracle Public Cloud IaaS. In this blog, I am going to show you how to get started with Oracle Container Engine for Kubernetes (OKE). OKE is a fully-managed, scalable, and highly available service that you can use to deploy your containerized applications to the cloud on Kubernetes.

Continue reading “Teaching How to Get Started with Oracle Container Engine for Kubernetes (OKE)”Teaching How to Provision Oracle Integration Cloud (OIC) with Cloud Stack and Terraform

We have covered multiple blogs on how to use Terraform to help automate the provisioning of environments and treat your Infrastructure as Code. Until now, for PaaS stacks, we have used Terraform together with Oracle PaaS Service Manager (PSM) CLI. This gives us great flexibility to script our own tailored PaaS stacks the way we want them. However, with flexibility comes responsibility, and in this case, if we choose to use PSM CLI, it’s up to us to script the whole provisioning/decommission of components that make up the stack. As well as what to do if we encounter an error half-way through, so that we leave things consistently.

A simpler way to provision PaaS stacks is by making use of Oracle Cloud Stack, that treats all components of the stack as a single unit, where all sub-components are provisioned/decommissioned transparently for us. For example, Oracle Integration Cloud (OIC) stack, is made of Oracle DB Cloud Service (DBCS), Integration Cloud Service (ICS), Process Cloud Service (PCS), Visual Builder Cloud Service (VBCS), IaaS, storage, network, etc. If we use Oracle Cloud Stack to provision an environment, we only have to pass a YAML template with the configuration of the whole stack and then, Cloud Stack handles the rest. Pretty awesome huh?

Similarly, as we have done in the past, we are going to use a “Build Server”. This will be used as a platform to help us provision our PaaS stacks. When provisioning this “Build Server”, I will add all the tooling it requires as part of its bootstrap process. For this, I am using Vagrant + Terraform, so that I can also treat my “Build Server” as “infrastructure as code” and I can easily get rid of it, after I built my target PaaS stack.

This is a graphical view of what I will be doing in this blog to provision an OIC stack via Cloud Stack:

Teaching How to Get started with Kubernetes deploying a Hello World App

In a previous blog, I explained how to provision a new Kubernetes environment locally on physical or virtual machines, as well as remotely in the Oracle Public Cloud. In this workshop, I am going to show how to get started by deploying and running a Hello World NodeJS application into it.

There are a few moving parts involved in this exercise:

- Using an Ubuntu Vagrant box, I’ll ask you to git clone a “Hello World NodeJS App”. It will come with its Dockerfile to be easily imaged/containerised.

- Then, you will Docker build your app and push the image into Docker Hub.

- Finally, I’ll ask you to go into your Kubernetes cluster, git clone a repo with a sample Pod definition and run it on your Kubernetes cluster.

Continue reading “Teaching How to Get started with Kubernetes deploying a Hello World App”

Teaching How to quickly provision a Dev Kubernetes Environment locally or in Oracle Cloud

This time last year, people were excited talking about technologies such as Mesos or Docker Swarm to orchestrate their Docker containers. Now days (April 2018) almost everybody is talking about Kubernetes instead. This proves how quickly technology is moving, but also it shows that Kubernetes has been endorsed and backed up by the Cloud Giants, including AWS, Oracle, Azure, (obviously Google), etc.

At this point, I don’t see Kubernetes going anywhere in the coming years. On the contrary, I strongly believe that it is going to become the default way to dockerise environments, especially now that it is becoming a PaaS offering with different cloud providers, e.g. Oracle Containers. This is giving the extra push to easily operate in enterprise mission critical solutions, having the backup of a big Cloud Vendor.

Teaching How to use Terraform to automate Provisioning of Oracle Integration Cloud (OIC)

In a previous blog, I explained how to treat your Infrastructure as Code by using technologies such as Vagrant and Terraform in order to help automate provisioning and decommissioning of environments in the cloud. Then, I evolved those concepts with this other blog, where I explained how to use Oracle PaaS Service Manager (PSM) CLI in order to provision Oracle PaaS Services into the Cloud.

In this blog, I am going to put together both concepts and show how simply you can automate the provisioning of Oracle Integration Cloud with Terraform and PSM CLI together.

To provision a new PaaS environment, I first create a “Build Server” in the cloud or as my boss calls it a “cockpit” that brings all the required bells and whistles (e.g. Terraform, PSM CLI, GIT, etc) to provision PaaS environments. I will add all the tooling it requires as part of its bootstrap process. To create the “Build Server” in the first place, I am using Vagrant + Terraform as well, just because I need a common place to start and these tools highly simplify my life. Also, this way, I can also treat my “Build Server” as “infrastructure as code” and I can easily get rid of it after I built my target PaaS environments and save with that some bucks in the cloud consumption model.

Once I build my “Build Server”, I will then simply git clone a repository that contains my scripts to provision other PaaS environments, setup my environment variables and type “terraform apply”. Yes, as simple as that!

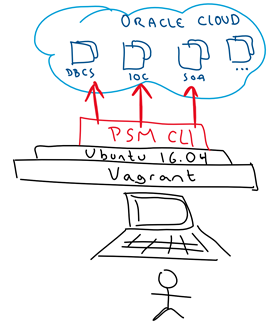

This is a graphical view of what I will be doing:

Teaching How to use Oracle PaaS Service Manager (PSM) CLI to Provision Oracle PaaS environments

In this blog, I am going to get you started with Oracle PaaS Service Manager (PSM) CLI – A great tool to manage anything API-enabled on any Oracle PaaS Service or Stack. For example, provisioning, scaling, patching, backup, restore, start, stop, etc.

It has the concept of Stack (multiple PaaS services), what means that you can very easily provision and manage full Stacks, such as Oracle Integration Cloud (OIC), that combines multiple PaaS solutions underneath, e.g. ICS, PCS, VBCS, DBCS, etc.

For this, we are going to use a pre-cooked Vagrant Box/VM that I prepared for you, so that you don’t have to worry about installing software, but moving as quickly as possible to the meat and potatoes.

This is a graphical view of what we are going to do:

Teaching How to push your code into multiple Remote Git repositories

Very quickly Git has become one of the most common ways to maintain and manage source code. It is easy to use, fast, reliable and most modern CI/CD tooling support it. GitHub also makes it easy to anyone who wants to share code, to do it in a free or very inexpensive way. Many companies however, also look for ways in which they can maintain their own private repositories as an enterprise-grade solution, like Developer Cloud Service (DevCS), the one Oracle gives for free with any IaaS or PaaS service.

In this blog I am going to show you how to push your code into any number of remote Git repositories. For example, you can have your private repository in DevCS and choose to also publish them into another GitHub remote repository (public or private) in GitHub.

This is the high-level idea:

- Let’s create a new Git repo in DevCS

- Let’s create a repo in GitHub

- Let’s clone DevCS repo locally on my laptop

- Let’s push the code to DevCS Git repo

- Let’s push the code to GitHub repo.

Continue reading “Teaching How to push your code into multiple Remote Git repositories”

Teaching How to use Terraform to Manage Oracle Cloud Infrastructure as Code

Infrastructure as Code is becoming very popular. It allows you to describe a complete blueprint of a datacentre using a high-level configuration syntax, that can be versioned and script-automated. This brings huge improvements in the efficiency and reliability of provisioning and retiring environments.

Terraform is a tool that helps automate such environment provisioning. It lets you define in a descriptor file, all the characteristics of a target environment. Then, it lets you fully manage its life-cycle, including provisioning, configuration, state compliance, scalability, auditability, retirement, etc.

Terraform can seamlessly work with major cloud vendors, including Oracle, AWS, MS Azure, Google, etc. In this blog, I am going to show you how simple it is to use it to automate the provisioning of Oracle Cloud Infrastructure from your own laptop/PC. For this, we are going to use Vagrant on top of VirtualBox to virtualise a Linux environment to then run Terraform on top, so that it doesn’t matter what OS you use, you can quickly get started.

This is the high-level idea:

Continue reading “Teaching How to use Terraform to Manage Oracle Cloud Infrastructure as Code”

Teaching how to use Vagrant to simplify building local Dev and Test environments

The adoption of Cloud and modern software automation, provisioning and delivery techniques, are also requiring a much faster way to simplify the creation and disposal of Dev and Test environments. A typical lifespan of a Dev environment can go from minutes to just a few days and that’s it, we don’t need it anymore.

Regardless of whether you use a Windows, Apple or Linux based PC/laptop, virtualisation of environments via Virtual Machines, help with this problem, besides it leaves your host OS clean. Vagrant takes VMs to the next level, by offering a very simple, lightweight and elegant solution to simplify such Virtual Machine life-cycle management, easy way to bootstrap your software/libraries requirements and sharing files across your host and guest machines.

In this blog I am going to show you how to get started with Vagrant. You will find it a very useful to quickly create and destroy virtual environments that help you develop and test your applications, demystify a particular topic, connecting to cloud providers, run scripts, etc.

For example, typical scenarios I use Vagrant for include: Dev and Test my NodeJS Applications, deploy and test my Applications on Kubernetes, run shell scripts, SDKs, use CLIs to interact with Cloud providers e.g. Oracle, AWS, Azure, Google, etc. All of this from my personal laptop, without worrying about side effects, i.e. if I break it, I can simply dispose the VM and start fresh.

I can assure you that once you give it a go, you will find it hard to live without it. So, let’s wait no more…

Continue reading “Teaching how to use Vagrant to simplify building local Dev and Test environments”