Customisation is essential part of any SaaS implementation to capture unique business needs. In Salesforce SaaS application also, there could be several use-cases where user might need to create a new Custom Object or add custom fields into existing Standard Object such as Contact, Account and Organisation etc. In this blog I will be showing how can we add Custom Object e.g. CochOrder which can have multiple Custom Fields e.g. Order Number, Shipping Cost, Source Region, Target Region and Total Amount etc. and can update that Custom Object fields using Oracle Integration Cloud (OIC) Salesforce adapter. I must recommend you to read my other blog which I have wrote to cover adding Custom Fields to existing Standard Object such as Contact, Account and Organisation etc. Most of the steps is going to same as previous blogs, so I am not going to repeat them here, instead will be only focusing only new changes related to Custom Objects.

Before, I go into deep drive, just want to highlight the core objective of this blog to show Salesforce configuration and OIC Salesforce adapter configuration, I am assuming reader has already basis understanding of OIC product features such as Connection, Integration, mapping and deployment.

My colleague had already covered Salesforce Inbound and Outbound integration using Oracle Integration Cloud Salesforce Adapter. So, I might not be repeating few steps which already been covered in this blog as well. if you doing Salesforce Integration first time, then its recommended to review these blogs before you proceed to read this blog.

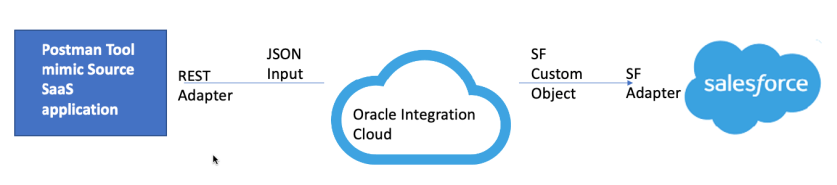

So let’s do deep dive now. Below are the high levels flow and steps which needs to be performed to achieve desired result.